Generative Engine Optimization, often shortened to GEO, is becoming a useful way to describe a new layer of search visibility work. Traditional SEO still matters, but it is no longer the whole picture. As AI systems increasingly summarize answers instead of sending users to a list of ten blue links, websites also need to think about machine readability, content structure, and whether their pages can be extracted, understood, and cited accurately.

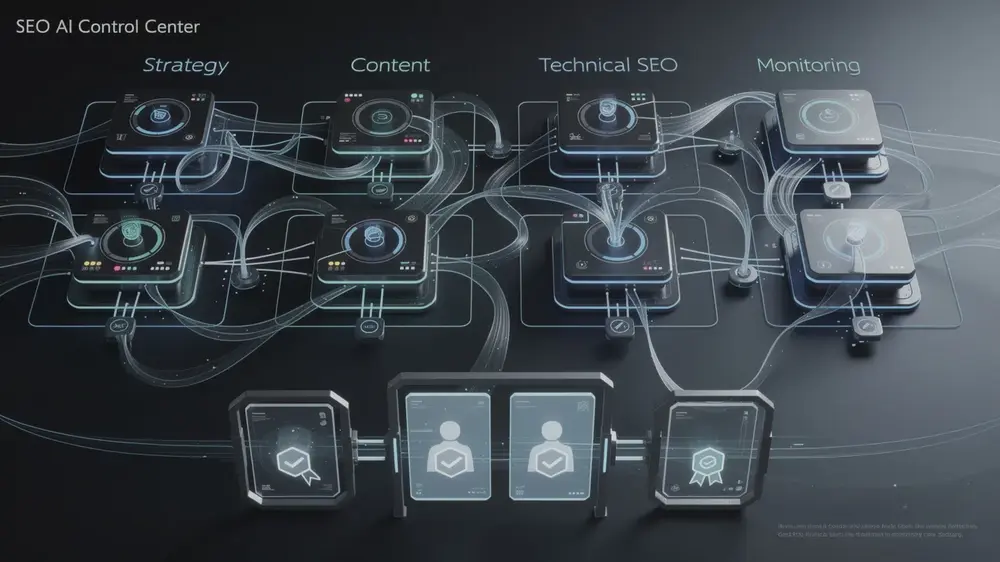

The term itself is still evolving, and different teams use it in slightly different ways. In practice, most GEO work overlaps with technical SEO, structured content design, rendering decisions, schema implementation, and log-based crawl analysis. The central question is simple: can AI systems reliably access and interpret what your site is trying to say?

- GEO focuses on how AI systems read, extract, and cite content, while traditional SEO is still centered on indexing and ranking in search results.

- Rendering choices matter. Sites that depend too heavily on client-side JavaScript can create access and interpretation problems for some crawlers and retrieval systems.

- Clean semantic HTML, structured data, internal linking, and controlled indexation make content easier to process at scale.

- Log file analysis can reveal how different bots spend crawl time across page types and where technical waste is happening.

- Teams that treat AI visibility as both an editorial and infrastructure issue are usually in a better position than teams that approach it as a copywriting trend alone.

What Changed and Why It Matters

Search behavior is changing in a way that affects both publishers and service businesses. In many cases, users now encounter summaries, synthesized answers, or AI-assisted result layers before they ever reach a traditional list of ranked pages. That does not mean classic SEO has stopped working. It means the path between a question and a source page is becoming less direct.

For site owners, this creates a new visibility problem. A page can still rank reasonably well in traditional search and yet fail to appear in AI-generated summaries if the content is difficult to retrieve, poorly structured, or buried behind rendering patterns that make machine access less reliable. That is where GEO becomes a practical concept rather than just a trendy label.

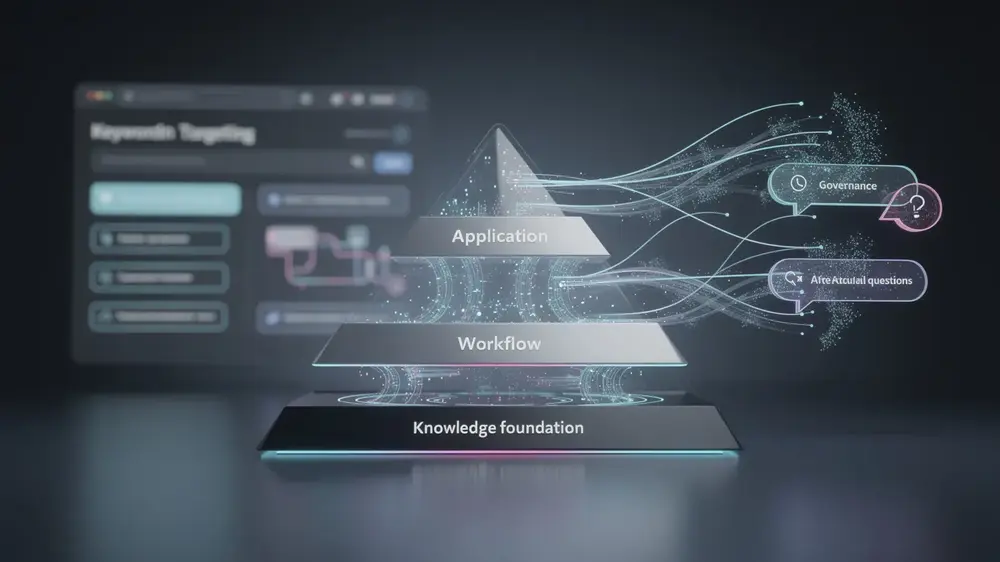

The most useful way to think about GEO is not as a replacement for SEO, but as an extension of it. Technical accessibility, structured meaning, and source clarity all matter more when an AI system is deciding whether a page is worth extracting or citing. In that environment, a site is no longer judged only by ranking position. It is also judged by whether its content can be processed cleanly.

This matters most for organizations building content-heavy sites, large e-commerce architectures, SaaS documentation hubs, and JavaScript-heavy front ends. In those environments, technical decisions made early in development can affect visibility much later, long after the design work looks finished.

Where GEO Connects With Technical SEO

Much of what is now being discussed under the GEO label is familiar to experienced technical SEO practitioners. Rendering, crawl efficiency, semantic structure, canonical control, structured data, and internal linking have always shaped how machines process content. What is different now is the growing importance of systems that do not simply index pages for ranking, but extract pieces of meaning for answer generation.

That shift makes some older technical issues more expensive to ignore. A site with fragmented architecture, excessive index bloat, weak heading structure, or important copy hidden behind client-side rendering may still function for users, but it becomes harder for automated systems to interpret consistently.

Rendering and Machine Readability

Rendering is one of the clearest examples. If core content only appears after heavy client-side JavaScript execution, some systems may not process that page as reliably as a server-rendered or statically generated alternative. This does not mean every JavaScript site will fail, and it does not mean server-side rendering is automatically the right answer for every project. It does mean that teams should test whether meaningful content is visible in the initial response, whether headings and body copy are present in accessible HTML, and whether critical information depends too heavily on front-end execution.
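
One way to run that test is to look at the raw HTML a server returns before any script executes. The following is a minimal sketch using only the Python standard library. It assumes you have already saved the initial response body as a string; the two sample documents, the class name, and the function name are hypothetical and exist only for illustration.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects headings and counts words in raw HTML, skipping script and style bodies."""

    SKIPPED = {"script", "style"}
    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self._in_heading = False
        self.headings = []
        self.words = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1
        elif tag in self.HEADINGS:
            self._in_heading = True
            self.headings.append("")

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1
        elif tag in self.HEADINGS:
            self._in_heading = False

    def handle_data(self, data):
        if self._skip_depth:
            return  # text inside <script> or <style> is not page copy
        self.words += len(data.split())
        if self._in_heading:
            self.headings[-1] += data


def summarize_initial_html(html: str) -> dict:
    """Return the headings and word count found without executing any JavaScript."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return {
        "headings": [h.strip() for h in parser.headings if h.strip()],
        "word_count": parser.words,
    }


# Hypothetical sample responses: one server-rendered page, one empty client-side shell.
SERVER_RENDERED = (
    "<html><body><h1>Pricing</h1>"
    "<p>Plans start at ten dollars per month.</p></body></html>"
)
CLIENT_SHELL = (
    '<html><body><div id="root"></div>'
    '<script>window.__DATA__ = {"title": "Pricing"};</script></body></html>'
)

for label, html in [("server-rendered", SERVER_RENDERED), ("client shell", CLIENT_SHELL)]:
    print(label, summarize_initial_html(html))
```

A page whose initial response yields no headings and almost no words is a candidate for closer rendering tests. A low count is only a prompt to investigate, not proof that a given crawler cannot process the page.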

Semantic Structure and Extraction

Well-structured content is easier to extract. Clear heading hierarchies, descriptive section breaks, consistent lists, tables where appropriate, and tightly written paragraphs all increase the odds that a machine can interpret the page correctly. This is one reason technical SEO practice and content design now overlap more than they used to.

Schema and Context Signals

Structured data does not solve every visibility problem, but it helps clarify what a page contains. Article, FAQPage, Product, Organization, and Review markup can reinforce the context around the page when used appropriately. In a broader machine-readability strategy, schema works best as a supporting signal rather than a substitute for strong content structure.
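
As an illustration, Article markup is commonly embedded as a JSON-LD script tag. The sketch below builds one with the standard library. The helper name and the sample values are assumptions made for this example, and the property set shown is a small subset of what schema.org defines for Article.

```python
import json


def article_jsonld(headline: str, author_name: str, date_published: str, url: str) -> str:
    """Build a JSON-LD script tag describing an Article (illustrative subset of properties)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date string
        "mainEntityOfPage": url,
    }
    payload = json.dumps(data, indent=2)
    # Escape "</" so a value can never close the surrounding script tag early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical values, for illustration only.
print(article_jsonld("What Is GEO?", "Jane Doe", "2026-01-15", "https://example.com/what-is-geo"))
```

The values in the markup should match what the visible page actually says. Markup that describes content the page does not contain works against the supporting role described above.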

Which Sites Face the Most Risk

Not every website is exposed in the same way. Small static sites with clear HTML and focused architecture may already be easier for automated systems to process than larger sites with complex rendering pipelines. The greatest risk tends to appear where technical complexity and content scale collide.

Single Page Applications are a common example. They can deliver smooth user experiences, but if key content depends on client-side rendering, testing becomes essential. What looks complete in a browser may not be equally accessible to every crawler or retrieval system. That gap can lead to inconsistent visibility.

Large e-commerce sites face a different kind of risk. Faceted navigation, parameterized URLs, tag archives, thin filtered pages, and low-value attachment paths can consume crawl resources and spread signals across pages that contribute little value. The result is not always dramatic at first. Often it shows up slowly through weak indexing patterns, diluted authority, and difficulty getting the right pages surfaced consistently.
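
To get a rough sense of how many URL variants point at the same underlying page, a crawl export can be grouped by a normalized form of each URL. This is a minimal sketch: the `IGNORED_PARAMS` set is a hypothetical example, and which parameters are safe to ignore depends entirely on how a given site uses them.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters that only filter, sort, or track on this imaginary site.
IGNORED_PARAMS = {"color", "size", "sort", "utm_source", "utm_medium", "utm_campaign"}


def canonical_form(url: str) -> str:
    """Drop ignored parameters, sort the rest, and remove any fragment."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    kept.sort()
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))


def group_variants(urls):
    """Map each canonical form to the list of crawled URLs that collapse into it."""
    groups = defaultdict(list)
    for url in urls:
        groups[canonical_form(url)].append(url)
    return dict(groups)


# Hypothetical crawl export.
crawled = [
    "https://shop.example.com/shoes?color=red&sort=price",
    "https://shop.example.com/shoes?utm_source=newsletter",
    "https://shop.example.com/shoes",
    "https://shop.example.com/boots?id=7&color=blue",
]

for canonical, variants in group_variants(crawled).items():
    print(len(variants), canonical)
```

Groups with many variants are worth checking against canonical tags and crawl rules, since they often mark where faceted navigation is multiplying URLs.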

Publishers and content-heavy brands face their own version of the same problem. If articles are duplicated across archives, categorized poorly, internally disconnected, or wrapped in weak semantic structure, AI systems may struggle to identify which page is the most useful source. For that reason, a broader AI search optimization strategy should start with infrastructure and information architecture, not just prompt design or content production volume.

From an editorial point of view, the real risk is not that AI search suddenly makes every old SEO practice useless. The bigger risk is that teams keep publishing into technical environments that are hard for machines to interpret, then misread the visibility problem as purely a content problem. In many cases, the issue starts lower in the stack.

Practical Steps Teams Can Take Now

The best response is usually not a dramatic rebuild. It is a disciplined technical review followed by targeted improvements. Start with the pages that matter most commercially or editorially and test whether they are easy to retrieve, easy to parse, and easy to understand.

Run a Machine Readability Audit

Check whether primary content appears in accessible HTML, whether heading levels are used logically, whether important copy is delayed behind scripts, and whether the page still makes sense when stripped back to its structural elements. If a page fails any of these checks, that is a stronger signal than any trend report.
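
The heading-level part of that audit is easy to automate. The sketch below flags two common problems: a page without exactly one `h1`, and a heading that skips a level on the way down. Both rules are assumptions about what "logical" means here. They are widely used conventions, not requirements of any particular crawler.

```python
import re
from html.parser import HTMLParser


class HeadingLevelParser(HTMLParser):
    """Records the level (1-6) of each heading in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if re.fullmatch(r"h[1-6]", tag):
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list:
    """Return human-readable notes about heading structure; an empty list means no issues found."""
    parser = HeadingLevelParser()
    parser.feed(html)
    parser.close()
    levels = parser.levels

    issues = []
    h1_count = levels.count(1)
    if h1_count != 1:
        issues.append(f"expected one h1, found {h1_count}")
    # Moving deeper by more than one level (h2 -> h4) skips a level;
    # moving back up by any amount (h4 -> h2) is fine.
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"h{previous} followed by h{current} skips a level")
    return issues


# Hypothetical page fragments.
print(heading_issues("<h1>Guide</h1><h2>Setup</h2><h3>Install</h3><h2>Usage</h2>"))
print(heading_issues("<h1>Guide</h1><h3>Install</h3>"))
```

Running this across one representative page per template usually says more than checking a single URL, because heading problems tend to live in templates rather than in individual articles.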

Clean Up Indexation and Architecture

Review low-value URLs, duplicate archives, unnecessary parameters, thin utility pages, and orphaned content. The goal is not to shrink the site blindly. The goal is to reduce noise so systems spend more time on the pages that deserve to be discovered. This is also where robots.txt and crawl control decisions should be reviewed carefully rather than treated as set-and-forget tasks.
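
A robots.txt review can start with a simple question: for each crawler you care about, which important URLs are currently allowed? The sketch below uses Python's built-in `urllib.robotparser` on an inline file. The rules, the `ExampleBot` and `OtherBot` names, and the URLs are all hypothetical. Note also that the standard-library parser does simple prefix matching, so it may not reproduce how every real crawler interprets wildcard rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents, inlined so the example needs no network access.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart

User-agent: ExampleBot
Disallow: /
"""


def access_report(robots_txt: str, user_agents, urls) -> dict:
    """Return {(agent, url): allowed} according to the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        (agent, url): parser.can_fetch(agent, url)
        for agent in user_agents
        for url in urls
    }


report = access_report(
    ROBOTS_TXT,
    user_agents=["ExampleBot", "OtherBot"],
    urls=[
        "https://example.com/products/widget",
        "https://example.com/search?q=widget",
    ],
)

for (agent, url), allowed in report.items():
    print(f"{agent:10} {'allow' if allowed else 'block':5} {url}")
```

A report like this makes accidental blocks visible, such as a blanket rule that keeps a crawler away from pages the team actually wants it to read.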

Improve Structured Content Design

Organize articles into clear sections with descriptive headings, concise answers, supporting detail, and clean internal connections. If a page explains a process, use steps. If it compares options, use comparison blocks or tables. If it answers recurring questions, use FAQ structure only when the content adds real value. Pages become easier to extract when the information is presented in predictable, readable patterns.

Use Log Data, Not Assumptions

Log file analysis is one of the most practical tools available here. It helps teams see which bots request which pages, how often they return, where crawl effort is wasted, and whether important sections of the site are being reached consistently. Assumptions about crawler behavior are often less useful than a month of clean server data.
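
As a starting point, the sketch below counts requests per bot and per top-level site section. It assumes access logs in the common "combined" format, and the sample log lines are invented for illustration. The `BOT_MARKERS` tuple is a short, non-exhaustive list of user-agent substrings. User-agent strings can be spoofed, so a serious audit would also verify bot identity by other means.

```python
import re
from collections import Counter

# Matches the "combined" access log format used by many web servers.
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)

# Illustrative, non-exhaustive substrings to look for in the User-Agent header.
BOT_MARKERS = ("Googlebot", "bingbot", "GPTBot")


def bot_name(agent: str):
    """Return the first matching marker, or None for non-bot traffic."""
    lowered = agent.lower()
    for marker in BOT_MARKERS:
        if marker.lower() in lowered:
            return marker
    return None


def section_of(path: str) -> str:
    """Reduce /products/widget?x=1 to /products so requests group by site section."""
    clean = path.split("?", 1)[0]
    return "/" + clean.strip("/").split("/", 1)[0]


def crawl_counts(lines) -> Counter:
    """Count requests per (bot, section), ignoring unparseable lines and non-bot traffic."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if not match:
            continue
        bot = bot_name(match.group("agent"))
        if bot is not None:
            counts[(bot, section_of(match.group("path")))] += 1
    return counts


# Invented sample lines using documentation-range IP addresses.
SAMPLE_LOG = [
    '192.0.2.1 - - [10/Jan/2026:10:00:00 +0000] "GET /products/widget HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '192.0.2.1 - - [10/Jan/2026:10:00:05 +0000] "GET /products/gadget?color=red HTTP/1.1" 200 4980 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '192.0.2.2 - - [10/Jan/2026:10:01:00 +0000] "GET /blog/what-is-geo HTTP/1.1" 200 8311 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '192.0.2.3 - - [10/Jan/2026:10:02:00 +0000] "GET /products/widget HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '192.0.2.1 - - [10/Jan/2026:10:03:00 +0000] "GET /search?q=shoes HTTP/1.1" 200 2210 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
]

for (bot, section), hits in sorted(crawl_counts(SAMPLE_LOG).items()):
    print(f"{bot:10} {section:10} {hits}")
```

On real data, the interesting rows are usually the ones where a low-value section such as internal search receives a large share of a bot's requests, or where an important section barely appears at all.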

- Prioritize high-value templates first: product pages, core landing pages, evergreen articles, and documentation hubs.

- Reduce low-value indexable clutter before expanding content output.

- Test rendering and extraction on representative pages instead of relying on one sitewide assumption.

- Review schema implementation as support for meaning, not as a shortcut to visibility.

- Keep fact-checking and editorial review in place for all AI-assisted workflows.

Signals Worth Monitoring in 2026

Because this area is still developing, teams should be careful not to build strategy on confident language alone. What matters is what can be observed over time. A useful GEO workflow depends on watching technical and editorial signals together rather than betting everything on one new acronym.

Crawler Access and Rendering Performance

Watch whether important templates are being requested consistently, whether rendered content is accessible in the response path, and whether performance bottlenecks are delaying meaningful content delivery. In practice, improvements in site speed and rendering performance often support both human usability and machine processing.

Citation Patterns and Source Selection

Where possible, compare which pages tend to be cited, summarized, or ignored across AI-assisted environments. The goal is not to chase every mention. It is to understand what kinds of pages are treated as extractable, trustworthy, and structurally clear enough to be used as sources.

Organizational Readiness

The teams that adapt most effectively are usually the ones that stop treating SEO, content, and development as separate conversations. GEO is still an emerging label, but the operational lesson is already clear: content visibility now depends more heavily on coordination between editorial planning, technical implementation, and ongoing measurement.