Zero-click search has passed the two-thirds threshold: more than 66% of Google searches now end without a visit to any external site. Rand Fishkin traces this structural shift back to 2011 and attributes it to Google’s post-IPO pivot toward user retention over referral traffic. AI overviews are accelerating the trend, but publishers and SEO professionals are dealing with a platform dynamic that has been compounding for well over a decade.

- Zero-click search is not an AI-era problem. It crossed 50% by 2018 and now exceeds 66%, reflecting 15 years of deliberate platform design rather than a recent technology shift.

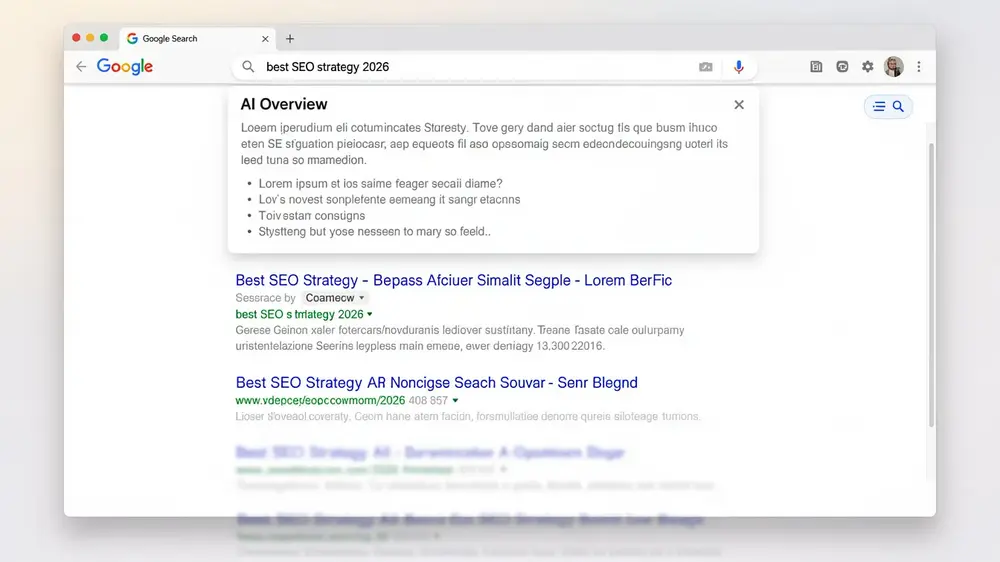

- AI-generated answers vary significantly across repeated queries, so submitting the same question roughly 10 times and comparing the results is a practical way to assess reliability, particularly for health and finance topics.

- Publishers that allowed unrestricted crawling without negotiating content licensing now have limited leverage, as Google controls their visibility while retaining users within its own properties.

- Subscription and direct audience models, as demonstrated by The New York Times, offer a more structurally resilient alternative to search referral dependency.

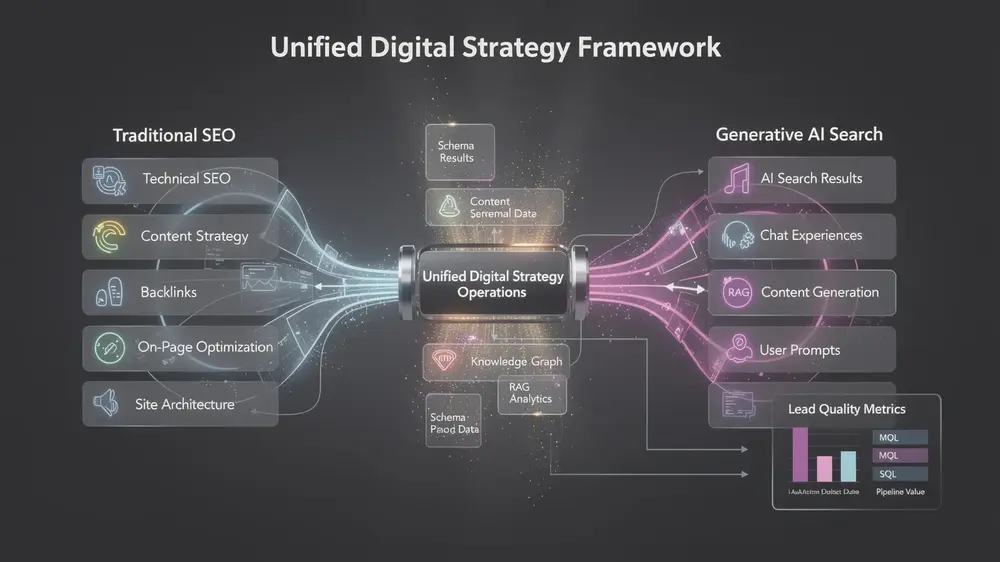

- Structured data implementation and strong on-page fundamentals remain the most durable technical responses, as content quality and relevance are the signals least likely to be devalued across algorithm shifts.

What Changed and Why It Matters

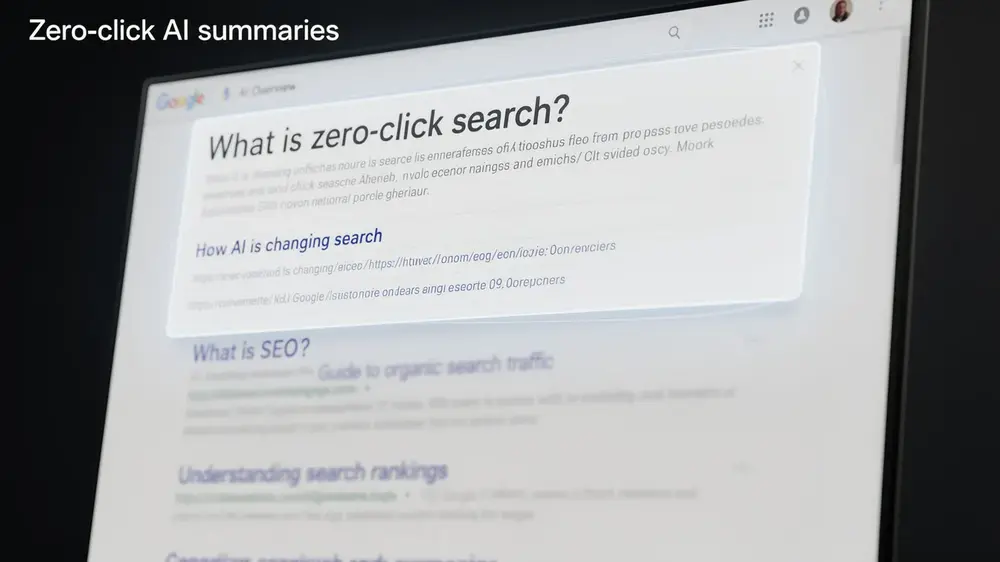

Zero-click search is not a recent problem created by AI overviews. It has been steadily eroding organic traffic for well over a decade. According to Rand Fishkin’s timeline, the pattern began around 2011 with simple on-page features such as weather boxes and calculators. By 2016 to 2017, roughly half of all Google searches were ending without a click to an external site. That figure crossed 50% by 2018, and today it exceeds 66%, meaning more than two-thirds of searches now keep users entirely within Google’s own properties.

The underlying driver was not a sudden technology shift. Google’s post-IPO priorities around growth and advertising revenue gradually pushed the platform toward retaining users rather than routing them to publishers. Each incremental feature, from knowledge panels to featured snippets, moved the needle a little further in that direction. AI overviews are the latest and most visible step, but they arrived on top of a trend that was already well established.

For publishers and site owners, the more sobering point is about timing. There was a window, roughly 15 to 20 years ago, when content creators might have collectively negotiated payment arrangements or placed meaningful limits on how their content could be crawled and displayed. That window has largely closed. Understanding how modern SEO fundamentals have evolved alongside these platform changes is now essential context for anyone trying to build a sustainable traffic strategy.

The zero-click trend is a structural shift that predates AI by years, which means strategies built around recovering lost referral traffic are likely addressing the symptom rather than the cause. Publishers who frame this as a recent disruption risk underestimating how deeply the incentive gap between Google and content creators has already widened. The more useful question is not how to reverse the trend, but how to build around it. (Hyogi Park, MOCOBIN)

Key Confirmed Details from Fishkin’s Interview

Rand Fishkin’s interview lays out a clear timeline for zero-click search growth that predates generative AI entirely. Roughly half of all searches produced no outbound click by 2016 to 2017. That figure crossed the majority threshold by 2018, and today over two-thirds of searches end without a user visiting any external site. This is a 15-year structural shift, not a side effect of recent AI features, and it has direct implications for how publishers and site owners should interpret traffic declines.

The variability of AI-generated answers adds a separate layer of risk. Unlike traditional search results, which tend to return consistent rankings for the same query, AI answers can differ substantially from one attempt to the next. Fishkin flags this as a particular concern for health and finance queries, where a single misleading response can carry real consequences. His practical recommendation is to submit the same question across roughly 10 separate queries and look for patterns. Answers that appear consistently are more likely to reflect reliable information than those that surface only once.
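The repeated-query check can be sketched in a few lines of Python. This is a minimal illustration, not Fishkin's own tooling: it assumes you have already collected the answers by hand or from whatever assistant you use, and the function names `normalize` and `consistent_claims` and the 70% cutoff are hypothetical choices. It treats each sentence as a claim and uses exact matching after lowercasing, which is crude (it will split on decimal points and miss paraphrases), so read the output as a first-pass signal only.

```python
import re
from collections import Counter


def normalize(answer: str) -> set[str]:
    """Split an answer into a set of lowercased, whitespace-normalized sentences."""
    parts = re.split(r"[.!?]\s*", answer.lower())
    return {re.sub(r"\s+", " ", p).strip() for p in parts if p.strip()}


def consistent_claims(answers: list[str], min_share: float = 0.7) -> list[tuple[str, float]]:
    """Return the claims that appear in at least `min_share` of the answers.

    Each claim is counted at most once per answer, so the share is the
    fraction of answers that contain it.
    """
    counts = Counter()
    for answer in answers:
        counts.update(normalize(answer))
    total = len(answers)
    kept = [(claim, n / total) for claim, n in counts.items() if n / total >= min_share]
    # Most frequent claims first; ties broken alphabetically for stable output.
    return sorted(kept, key=lambda item: (-item[1], item[0]))
```

Passing in roughly 10 collected answers returns the statements that recur across most of them. Anything that shows up only once or twice drops out, which matches the idea that one-off answers deserve less trust than recurring ones.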

On Google’s broader trajectory, Fishkin connects the platform’s post-IPO shift to a reorientation toward investor expectations and revenue growth. That shift made the ecosystem progressively harder for publishers, even as ordinary users continued preferring Google over alternatives like Bing. For anyone tracking how Google’s core update changes affect organic visibility, this context helps explain why publisher-friendly signals have steadily lost ground to monetization priorities.

Who Is Affected and the Main Implications

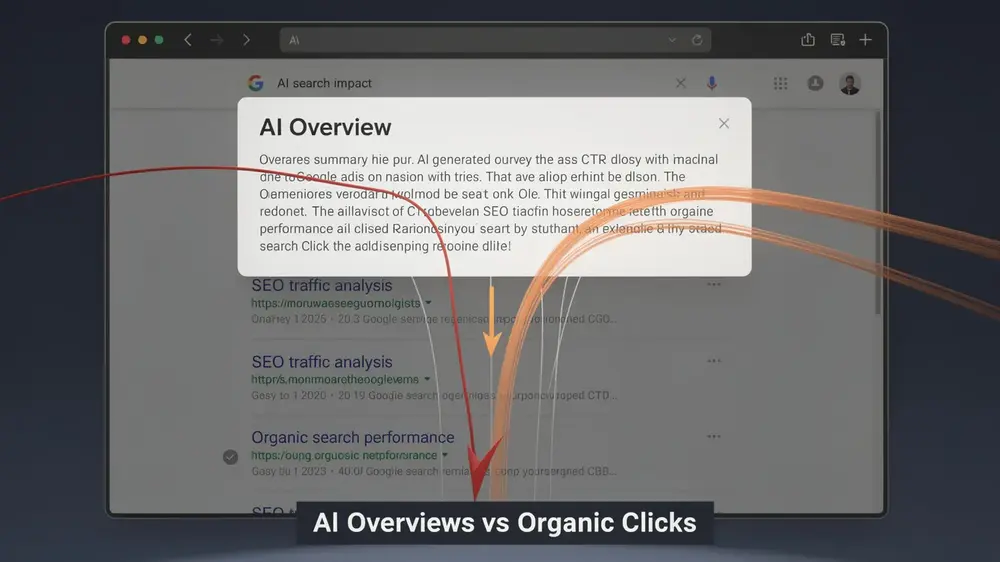

The shift toward zero-click search and AI-generated answers does not affect all publishers equally, but the pressure is broadly felt. Large media publishers and news sites that allowed unrestricted crawling without negotiating content licensing agreements now find themselves with minimal leverage. Google controls their visibility while retaining users within its own properties, leaving those publishers dependent on a platform that has little structural incentive to send traffic outward.

Independent creators and smaller sites face a sharper version of the same problem. Traffic is far more concentrated than it was in the early web era, when opportunity was spread more evenly across sites. Platform control has tightened considerably, and smaller operations rarely have the resources to negotiate or diversify quickly.

For SEO professionals and site owners in traffic-reliant niches, the practical response involves moving away from click-volume metrics as the primary measure of success. Optimizing for user intent rather than raw organic traffic is becoming a more durable foundation, as traditional referral-based models grow less reliable.

The New York Times is frequently cited as a workable adaptation model. Rather than depending on search referrals, it has built a subscription business that monetizes sustained reader attention directly. That path is not available to every publisher, but the underlying principle, reducing dependency on Google as a traffic source, applies broadly across the industry.

Practical Response and Next Steps

The clearest takeaway from the current search landscape is that waiting for conditions to return to normal is not a strategy. Organic referral traffic from Google has been declining steadily, and the rise of AI-generated answers accelerates that trend. The practical response is to build structures that do not depend on that traffic to survive.

Revenue diversification comes first. Publishers like The New York Times operate within search ecosystems without being dependent on them, generating income through subscriptions and direct audience relationships. That model is worth studying regardless of your site’s scale.

Optimize for Zero-Click Visibility

Appearing in featured snippets or AI-generated answers may be the only visibility opportunity for many queries. Implementing structured data and schema markup gives search systems clearer signals about your content, improving the chances of inclusion in these surfaces even when no click follows.
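As a rough illustration of what structured data looks like in practice, the sketch below builds a schema.org `Article` description and wraps it in a JSON-LD script tag for a page's HTML. The helper names `article_jsonld` and `to_script_tag` are hypothetical and the field values in the usage note are placeholders. Which properties a given search feature actually uses is not covered here, so treat this as a starting point rather than a complete markup recipe.

```python
import json


def article_jsonld(headline: str, author_name: str, date_published: str, url: str) -> dict:
    """Build a minimal schema.org Article description as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date, e.g. "2025-01-15"
        "mainEntityOfPage": url,
    }


def to_script_tag(data: dict) -> str:
    """Serialize the dict as JSON-LD inside a script tag.

    "</" is escaped so that text such as "</script>" inside a value
    cannot close the tag early; "\\/" is still valid JSON for "/".
    """
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'
```

Calling `to_script_tag(article_jsonld("Example headline", "Jane Doe", "2025-01-15", "https://example.com/post"))` yields a block that can be placed in the page's HTML. Generating the markup from the same data that renders the page helps keep the two from drifting apart.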

Reduce Single-Channel Dependence

For sites covering health, finance, or other sensitive topics, testing AI-generated answer reliability through repeated queries is worth the time. Single outputs can vary significantly, so patterns across multiple responses give a more accurate picture of how your subject area is being handled.

Beyond monitoring, a few steps reduce reliance on Google as the single traffic channel:

- Build branded search recognition so users seek you directly

- Invest in local SEO and video content as alternative traffic sources

- Monetize through platform ecosystems and direct audience channels

Signals To Watch as the Search Landscape Shifts

Several converging trends deserve close attention from anyone managing organic visibility right now. Platform consolidation is tightening, with fewer powerful entities controlling how information reaches audiences. From an editorial perspective, this resembles pre-internet media concentration, where individual creators produce content inside systems they do not own or govern. The practical implication is that dependency on any single platform carries growing structural risk.

Zero-click search continues its long trajectory. On-page features, from the early answer boxes through featured snippets to AI-generated overviews, have steadily reduced click-through opportunities since 2011, and the March 2026 Google core update appears to be accelerating that pressure specifically for lower-value pages. Sites that rely on thin or redundant content may see measurable traffic declines as a direct result.

Within the SEO community, the conversation around measurement is also changing. AI visibility strategies are gaining traction alongside a newer concept: bot audiences. As automated agents increasingly consume and act on web content, some practitioners are beginning to treat bot interaction volume as a performance signal alongside traditional human click-through rates. Whether this becomes a mainstream metric remains uncertain, but the discussion reflects how broadly the definition of “search success” is being reconsidered.
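One way to get a first look at bot interaction volume is to tally user-agent strings from server logs. The sketch below is an assumption-laden illustration, not an established metric. The `BOT_TOKENS` tuple is a short, non-exhaustive sample of crawler names, the `bot_share` function is hypothetical, and user-agent strings can be spoofed, so the result is an estimate at best.

```python
from collections import Counter

# Illustrative, non-exhaustive substrings found in some crawler user agents.
BOT_TOKENS = ("googlebot", "bingbot", "gptbot")


def bot_share(user_agents: list[str]) -> dict[str, float]:
    """Return the fraction of requests attributed to each bot token.

    Requests matching no token are grouped under "human_or_unknown".
    """
    counts = Counter()
    for ua in user_agents:
        ua_lower = ua.lower()
        label = next((t for t in BOT_TOKENS if t in ua_lower), "human_or_unknown")
        counts[label] += 1
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()} if total else {}
```

Feeding in the user-agent column from an access log gives a per-crawler share that can be tracked over time next to human click-through figures.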

Traffic distribution patterns remain uneven, and the key open question is whether Google’s user satisfaction stays high enough to prevent meaningful migration toward alternative search engines. Strong on-page SEO fundamentals remain relevant regardless of which platform dominates, precisely because content quality and relevance are the factors least likely to be devalued by any algorithm shift.