Google Search Updates in 2026: What Changed, What Is Confirmed, and What SEOs Should Do

Google’s March 2026 search updates have renewed attention on a familiar question: what actually matters for rankings when core systems change and spam enforcement becomes more aggressive? The short answer is not that SEO fundamentals stopped working. It is that thin, repetitive, and low-value content has less room to hide, while genuinely useful pages with clear expertise, context, and structure have more room to stand out.

As of this writing, the officially confirmed events are the March 2026 core update and the March 2026 spam update. That distinction matters. In periods of high volatility, third-party commentary often moves faster than Google’s own documentation. For site owners, the safest approach is to separate confirmed update details from industry interpretation, then compare both against real performance data in Search Console.

- Google officially confirmed a March 2026 core update and a March 2026 spam update, and their close timing likely contributed to ranking volatility.

- This is not evidence that one new ranking factor suddenly replaced all others. Instead, it points to continued refinement in how Google evaluates helpfulness, trust, originality, and usefulness.

- E-E-A-T remains an important evaluation framework for content quality, but it should not be treated as a single mechanical ranking signal.

- Sites publishing generic, scaled, or weakly edited pages are more exposed than sites that show real expertise, clearer authorship, and stronger topical focus.

- The most practical response is not panic editing. It is page-by-page review: improve weak content, tighten structure, and monitor patterns in Search Console over the full rollout window.

What Changed and Why It Matters

Google’s update cycle in March 2026 was notable not because it introduced an entirely new rulebook, but because it reinforced the direction Google has been signaling for years. Helpful, reliable, people-first content remains the target. Pages built mainly to capture search traffic without offering much original value are under more pressure when core systems and spam systems move in close succession.

The March 2026 spam update began on 24 March 2026 and completed on 25 March 2026. Soon after, the March 2026 core update began on 27 March 2026 and completed on 8 April 2026. That sequence likely contributed to the unusually strong sense of volatility many publishers reported across informational and commercial-intent queries.

For SEOs, the important takeaway is not to reduce everything to one headline like “E-E-A-T now runs Google.” That is too simplistic. A better reading is that Google’s systems continue to reward pages that feel specific, trustworthy, and genuinely written for users, while broad, interchangeable content has become easier to discount.

This is also happening in a search environment where users are increasingly seeing direct answers, summaries, and richer result formats before they click. That does not make rankings irrelevant, but it does change what success looks like. In some query spaces, strong visibility now depends not only on ranking position, but also on whether your page is clear enough to be understood, extracted, and trusted.

If you are new to the broader context, the MOCOBIN front page is a useful starting point for navigating related articles on SEO basics, technical SEO, and algorithm update coverage.

What Is Officially Confirmed About the 2026 Google Updates

When discussing algorithm updates, the first step is separating confirmed facts from interpretation. Based on Google’s Search Status Dashboard, two search-related events are clearly confirmed for this period.

- March 2026 spam update: released on 24 March 2026 and completed on 25 March 2026.

- March 2026 core update: released on 27 March 2026 and completed on 8 April 2026.

That confirmed timeline is enough to explain why many sites saw movement during late March and early April. It does not automatically prove that every ranking change came from one specific new factor, one AI system, or one isolated content pattern. Core updates are broad by design, and Google consistently describes them in terms of improving how its systems assess relevance and quality overall.

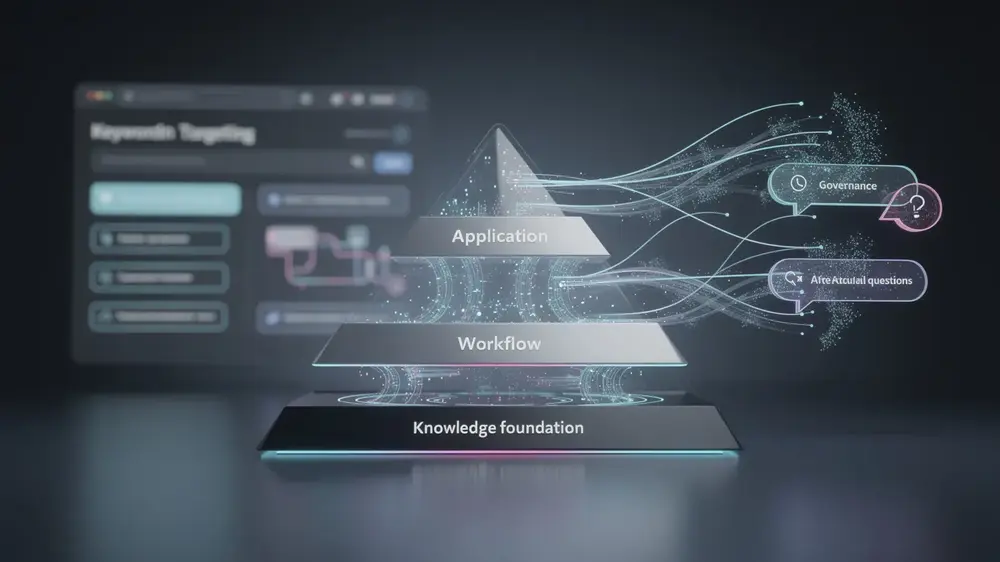

This is where many update summaries go too far. It is reasonable to say that trust, usefulness, and content quality matter more when broad core systems are recalibrated. It is less reasonable to claim that Google has officially declared E-E-A-T to be the single dominant ranking factor. That is not how Google describes it. For creators working through on-page SEO fundamentals, the smarter approach is to treat E-E-A-T as a quality lens that helps shape better content, not as a shortcut formula.

The same caution applies to AI content. Google has said that the focus is on quality and usefulness, not on whether a page was created with AI assistance. The higher-risk pattern is scaled content that adds little value, not AI use by itself.

How These Updates Affect Different Types of Sites

Not every site is affected in the same way. In practice, the dividing line is often less about niche or business model and more about content quality thresholds. Pages that are generic, repetitive, and only lightly edited tend to become more vulnerable during broad recalibration periods. Pages that are more specific, better sourced, and clearly written for a real audience tend to hold up better over time.

Independent publishers and specialist sites can benefit when they publish content that reflects real experience, narrow topical focus, and visible editorial care. A smaller site does not need to out-scale larger competitors if it can be more useful, more precise, or more trustworthy on the query it targets.

Large content publishers face a different risk. When production systems prioritize volume over editorial depth, entire sections can become filled with pages that sound polished but say very little. Those are exactly the kinds of pages that tend to lose resilience during update periods.

E-commerce and local business sites are not immune either. Informational traffic becomes harder to defend when category pages and support content are vague, thin, or poorly structured. In those cases, improving headings, FAQs, schema, and factual clarity can help pages communicate value more clearly. For a broader look at the search environment around answer-first interfaces, see this article on AI-driven search shifts.

- SaaS and niche B2B publishers: these usually benefit from stronger topical depth, original examples, and clearer demonstration of first-hand expertise.

- Affiliate and comparison sites: these need stronger editorial standards, more transparent evaluation criteria, and clearer signals that pages were reviewed by someone who understands the subject.

- Mass-content operations: these are more exposed when many pages follow the same structure, repeat the same claims, or add little beyond what already exists elsewhere.
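One rough way to check whether pages on a site repeat each other is to compare their wording directly. The sketch below uses Jaccard similarity over three-word shingles, which is a generic text-overlap technique and not something Google has described as part of its systems. The function names, the sample pages, and the 0.5 threshold are all illustrative assumptions.

```python
import re
from itertools import combinations


def shingles(text, size=3):
    """Return the set of overlapping word n-grams (shingles) in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a, b):
    """Jaccard similarity of two sets: size of intersection over size of union."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def similar_pairs(pages, threshold=0.5):
    """List page pairs whose shingle overlap meets the threshold.

    `pages` maps a URL path to its body text. The threshold is an
    arbitrary starting point, not a known cut-off used by any search engine.
    """
    sets = {url: shingles(body) for url, body in pages.items()}
    pairs = []
    for u, v in combinations(sorted(sets), 2):
        score = jaccard(sets[u], sets[v])
        if score >= threshold:
            pairs.append((u, v, round(score, 2)))
    return pairs


# Hypothetical page bodies, shortened for illustration.
pages = {
    "/a": "best running shoes for beginners in 2026",
    "/b": "best running shoes for beginners in 2025",
    "/c": "how to descale an espresso machine at home",
}
print(similar_pairs(pages))
```

Pairs that score high are candidates for manual review, merging, or rewriting. The score only measures shared wording, so it cannot tell whether a page adds real value.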

What E-E-A-T Means in Practice, Not in Theory

E-E-A-T is often discussed in abstract terms, but it becomes more useful when translated into page-level decisions. A strong page does not just mention expertise. It shows why the writer or site is worth trusting, how claims were checked, and what unique value the page adds beyond a surface summary.

In practical SEO work, that usually means things like:

- clear authorship and a consistent editorial voice

- examples, observations, or comparisons that reflect first-hand review or real analysis

- claims framed with appropriate caution when Google has not officially confirmed a detail

- references to primary sources where possible, especially for update timelines and policy guidance

- content structure that helps both readers and search systems understand what the page is trying to answer

That is also why technical polish still matters, but within limits. Good structure, clean headings, and structured data markup can help search engines interpret the page more clearly. At the same time, no markup or UX tweak can compensate for content that feels copied, padded, or disposable.
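As a small illustration of structured data markup, the sketch below builds a schema.org Article object as JSON-LD. The `@context`, `@type`, `headline`, `author`, and `publisher` properties come from the schema.org vocabulary. The helper name `article_jsonld` and the values passed to it are placeholders chosen for this example, and the set of properties a given page needs depends on Google's current structured data documentation.

```python
import json


def article_jsonld(headline, author_name, publisher_name):
    """Build a schema.org Article description and return it as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
    }
    return json.dumps(data, indent=2)


# Example values only; replace them with the page's real details.
markup = article_jsonld(
    headline="Google Search Updates in 2026",
    author_name="Example Author",
    publisher_name="Example Publisher",
)
print(markup)
```

The resulting string is typically placed inside a script element of type application/ld+json in the page's HTML. Markup like this describes the page more clearly, but it does not change the quality of the content it describes.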

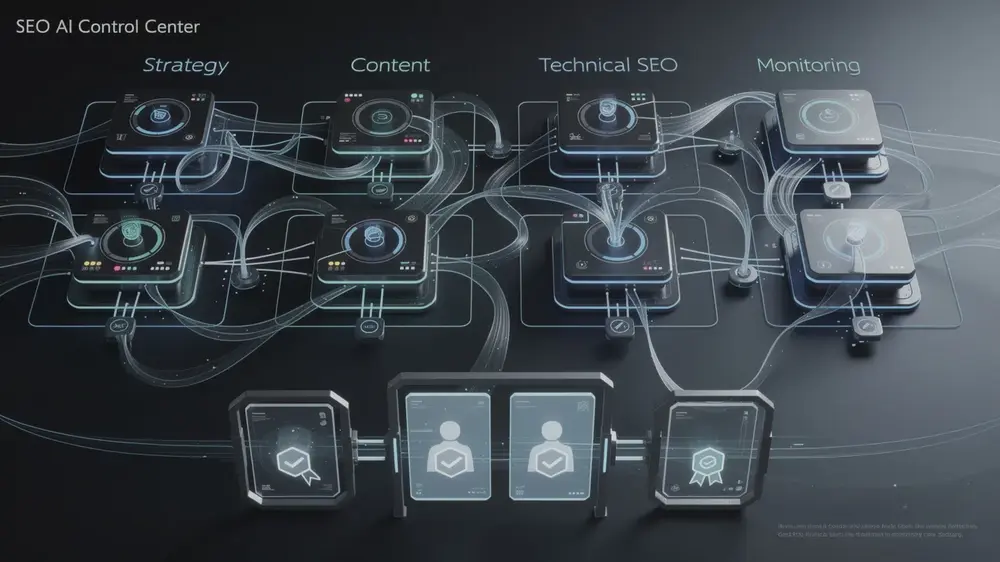

Practical Response: What Site Owners Should Do Now

When rankings move during a core update, the temptation is to rewrite everything at once. That is rarely the best response. A calmer and more reliable method is to review page groups, identify patterns, and improve the weakest areas first.

1. Recheck weak pages before rewriting strong ones

Start with pages that were already underperforming before the update. In many cases, the update did not create the weakness. It simply exposed it more clearly. Look for pages with shallow coverage, repetitive phrasing, no clear author perspective, weak supporting details, or search-intent mismatch.

2. Improve specificity, not just length

Longer content is not automatically better. In my experience, pages recover more often when vague sections are replaced with clearer explanations, tighter examples, and better structure, not when another 800 words are added for the sake of word count.

3. Strengthen trust signals where they matter most

If a page makes strategic recommendations, explains ranking systems, or interprets official guidance, it should show stronger editorial care. That includes cleaner sourcing, more careful phrasing, and fewer sweeping claims that cannot be verified.

4. Review structure for extractability and clarity

Use headings that match real search questions. Break dense paragraphs into lists where appropriate. Keep definitions, timelines, and action steps easy to scan. For sites building out foundations, a stronger grasp of technical SEO principles can make those improvements easier to implement consistently.

5. Monitor changes by page type in Search Console

- Compare pre-update and post-update performance by section, not just at site level.

- Track impressions, clicks, average position, and query mix for pages that gained or lost visibility.

- Watch whether declines are concentrated in informational pages, commercial pages, or one content template.

- Use the full rollout window as your baseline rather than reacting to a single volatile day.
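The comparison above can be sketched in a few lines of Python. This is a minimal example that assumes you have exported daily page-level rows from Search Console into a list of dictionaries. The row shape (`date`, `page`, `clicks`), the helper names, and the sample numbers are assumptions for illustration, not a Search Console API. The window dates are the rollout dates given in this article. Excluding the whole rollout window from both sides is one way to apply the last point, and it works best when the pre and post periods cover the same number of days.

```python
from collections import defaultdict
from datetime import date
from urllib.parse import urlparse

# Rollout window as stated in this article: spam update start to core update end.
WINDOW_START = date(2026, 3, 24)
WINDOW_END = date(2026, 4, 8)


def section_of(url):
    """Return the first path segment, e.g. '/blog/post-a' -> 'blog'."""
    parts = [p for p in urlparse(url).path.split("/") if p]
    return parts[0] if parts else "(root)"


def compare_by_section(rows):
    """Sum clicks per site section before and after the rollout window.

    Rows dated inside the window are skipped so that volatile rollout
    days do not distort either side of the comparison.
    """
    totals = defaultdict(lambda: {"pre": 0, "post": 0})
    for row in rows:
        if row["date"] < WINDOW_START:
            period = "pre"
        elif row["date"] > WINDOW_END:
            period = "post"
        else:
            continue
        totals[section_of(row["page"])][period] += row["clicks"]

    report = {}
    for section, t in totals.items():
        # Relative change is undefined when there were no clicks beforehand.
        change = None if t["pre"] == 0 else (t["post"] - t["pre"]) / t["pre"]
        report[section] = {"pre": t["pre"], "post": t["post"], "change": change}
    return report


# Hypothetical sample rows, not real performance data.
rows = [
    {"date": date(2026, 3, 10), "page": "https://example.com/blog/a", "clicks": 100},
    {"date": date(2026, 3, 26), "page": "https://example.com/blog/a", "clicks": 999},
    {"date": date(2026, 4, 15), "page": "https://example.com/blog/a", "clicks": 60},
    {"date": date(2026, 3, 12), "page": "/shop/x", "clicks": 50},
    {"date": date(2026, 4, 16), "page": "/shop/x", "clicks": 55},
]
report = compare_by_section(rows)
for section, stats in sorted(report.items()):
    print(section, stats)
```

The same grouping works for impressions or average position if those fields are added to each row. Grouping by the first path segment is a simplification; sites with flatter URL structures may need a mapping from URL to page type instead.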

Signals To Watch Over the Next Few Weeks

Once a core update finishes rolling out, the immediate panic usually fades, but the useful work starts after that. What matters next is whether patterns settle in a way that reveals a real site quality issue, or whether short-term movement reverses once the system stabilizes.

- Page groups that consistently lose impressions: this can point to template weakness, shallow editorial standards, or weak search-intent alignment.

- Queries where impressions remain but clicks fall: this may reflect changes in SERP features, richer answers, or lower snippet appeal rather than a pure ranking collapse.

- Sections with low differentiation: pages that sound too similar to each other or too similar to competitors are worth reviewing first.

- Authorship and sourcing gaps: if a page makes confident claims without showing who reviewed it or what it relies on, that is often worth fixing even before deeper rewrites begin.
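The second signal in the list, impressions holding while clicks fall, is straightforward to check programmatically. The sketch below assumes two equal-length periods of query-level data in the form of query mapped to (clicks, impressions). The function name, the input shape, and both thresholds are illustrative assumptions and should be tuned to the site's own traffic levels.

```python
def flag_ctr_drops(before, after, max_impression_loss=0.10, min_ctr_drop=0.20):
    """Return queries whose impressions held up while click-through rate fell.

    `before` and `after` map query -> (clicks, impressions).
    A query is flagged when impressions dropped by no more than
    `max_impression_loss` and CTR dropped by at least `min_ctr_drop`,
    both expressed as relative fractions.
    """
    flagged = []
    for query, (clicks_b, impr_b) in before.items():
        if query not in after or impr_b == 0:
            continue
        clicks_a, impr_a = after[query]
        if impr_a == 0:
            continue
        impressions_held = impr_a >= impr_b * (1 - max_impression_loss)
        ctr_before = clicks_b / impr_b
        ctr_after = clicks_a / impr_a
        ctr_fell = ctr_before > 0 and ctr_after <= ctr_before * (1 - min_ctr_drop)
        if impressions_held and ctr_fell:
            flagged.append(query)
    return sorted(flagged)


# Hypothetical numbers for illustration only.
before = {
    "core update": (100, 1000),
    "spam update": (50, 1000),
    "eeat": (80, 1000),
}
after = {
    "core update": (50, 980),   # impressions steady, CTR roughly halved
    "spam update": (48, 1000),  # small CTR change, below the threshold
    "eeat": (20, 400),          # impressions fell sharply, a different problem
}
print(flag_ctr_drops(before, after))
```

Flagged queries are worth checking manually in the live results, since a changed result layout and a weaker snippet call for different fixes.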

“During update periods, the most common mistake is overreacting to the headline and under-reviewing the page itself. A careful audit of weak sections, thin templates, and unsupported claims usually tells you more than broad industry panic ever will.”

Hyogi Park, MOCOBIN

For official confirmation during future volatility, the Google Search Status Dashboard should remain your first checkpoint before drawing broader conclusions.

- Google Search Status Dashboard – March 2026 spam update

- Google Search Status Dashboard – March 2026 core update

- Google Search Central – Google Search’s Core Updates

- Google Search Central – Creating helpful, reliable, people-first content

- Google Search Central – Guidance on using generative AI content

- Google Search Central – Understanding page experience in Google Search results

- Google Search Central – A guide to Google Search ranking systems