Generative AI reached 53% global adoption within three years of ChatGPT’s launch, according to Stanford’s 2026 AI Index, a pace that outstripped both personal computers and the internet at comparable stages. For SEO professionals and publishers, the practical consequence is already visible in Google’s aggressive expansion of AI Overviews to 1.5 billion monthly users and AI Mode to 75 million daily active users, both of which are reshaping how organic traffic is distributed.

- Generative AI hit 53% global adoption by 2025, but the figure bundles casual and heavy users and should be read as directional rather than precise.

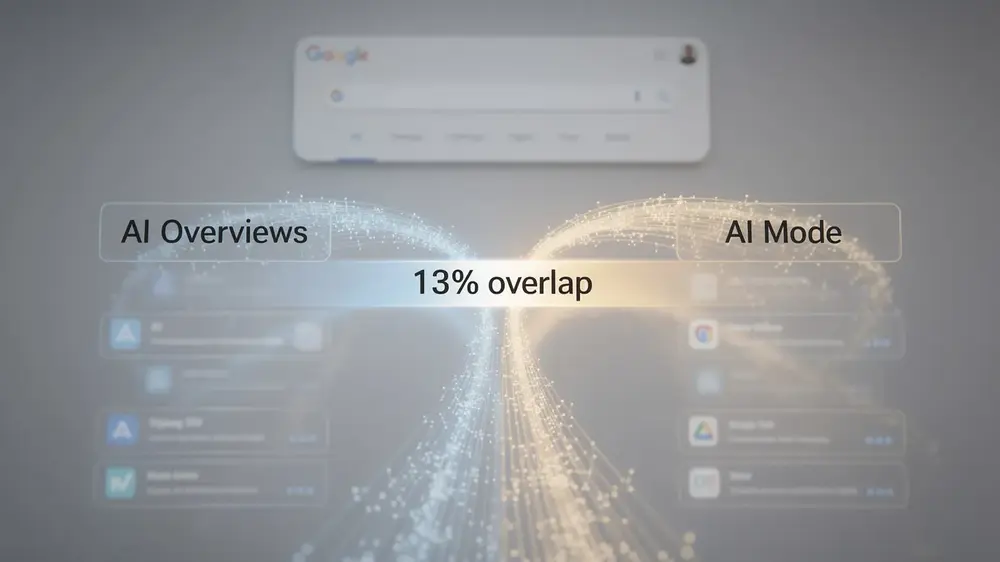

- Google AI Overviews and AI Mode share only 13% URL overlap for identical queries, meaning the two surfaces require separate optimization strategies.

- The Foundation Model Transparency Index fell from 58 to 40 in one year, making it harder to reason about why specific content earns AI citations.

- Google Search Console does not yet separate AI-driven feature performance from standard organic metrics, leaving practitioners reliant on third-party query-level tools.

- Content built on original data, firsthand experience, and genuine depth is harder for AI summaries to replicate and better positioned as zero-click searches continue to rise.

What Changed and Why It Matters

Generative AI reached 53% global adoption within three years of ChatGPT’s November 2022 launch, according to Stanford’s 2026 AI Index. That pace outstripped how quickly personal computers or the internet reached comparable adoption levels. One important caveat: this growth rode on decades of existing PC and internet infrastructure, so it did not require consumers to buy new hardware before they could participate.

The adoption curve helps explain why Google moved so aggressively on AI search features throughout 2024 and 2025. AI Overviews expanded to 1.5 billion monthly users by Q1 2025, and AI Mode reached 75 million daily active users by Q3 2025. From a product strategy standpoint, those rollout timelines track closely with the broader adoption surge.

The headline figure does carry real limitations. It does not distinguish between heavy and casual users, and it bundles standalone AI tools together with search-specific applications. U.S. adoption estimates alone range from 28% to 54% depending on how researchers define and measure usage, so the 53% global number should be treated as directional rather than precise.

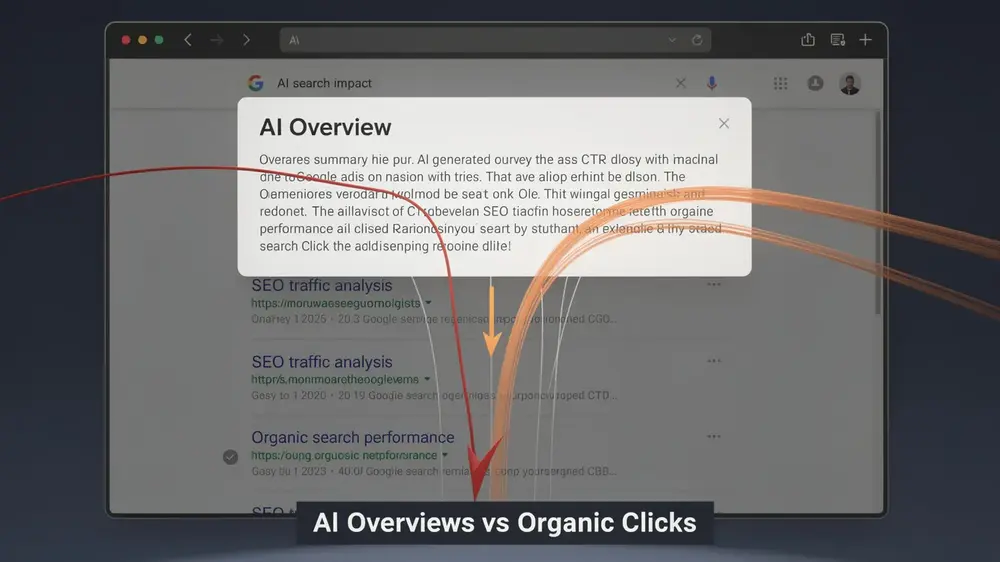

For publishers and site owners, the practical consequence is a measurable rise in zero-click searches. Optimizing for AI-powered search features, the practice often called answer engine optimization, is no longer a future consideration. Traffic dynamics are already shifting, and sites that rely on traditional organic clicks are feeling that pressure now.

Key Confirmed Details from the Stanford AI Index Report

The 400-page Stanford AI Index report presents a picture of AI capability that is simultaneously impressive and uneven. Frontier models now outperform humans on PhD-level science questions and competitive mathematics, and AI agents have improved their success rates on real-world tasks from 20% to 77%. Yet the same models correctly read analog clocks only 50% of the time. The report describes this as a “jagged frontier”, a pattern where exceptional performance in one area coexists with surprising failure in another.

Transparency has moved in the opposite direction. The Foundation Model Transparency Index fell from 58 to 40 in a single year. Google, Anthropic, and OpenAI have each stopped disclosing dataset sizes and training duration. Of 95 notable models launched in 2025, 80 were released without training code. Investment, by contrast, is accelerating sharply. Global corporate AI spending reached $581 billion in 2025, up 130% from the prior year, with over 90% of frontier models now originating from private companies rather than academic institutions.

For search practitioners, the Ahrefs finding carries direct weight. Research comparing Google AI Mode and AI Overviews found only 13% URL overlap for the same queries, meaning the two surfaces are drawing from largely separate citation pools. Google’s Robby Stein has confirmed that AI Overviews are pulled back when engagement signals are low, which points to uneven performance across query contexts. Publishers tracking the shift toward AI-driven search visibility should treat these two surfaces as distinct optimization targets rather than a unified system.
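To make the overlap figure concrete, here is a minimal sketch of how URL overlap between two surfaces could be measured for a single query. It assumes overlap means the Jaccard index of the two cited-URL sets; the methodology behind the 13% figure is not described here and may differ. The `normalize` and `url_overlap` helpers and the sample URLs are hypothetical.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Reduce a URL to host + path so trivial variants compare equal.

    Assumption: scheme, "www." prefix, query string, and trailing slash
    are ignored. A real study might normalize differently.
    """
    parts = urlsplit(url.strip())
    host = parts.netloc.lower().removeprefix("www.")
    path = parts.path.rstrip("/")
    return f"{host}{path}"


def url_overlap(cited_a: list[str], cited_b: list[str]) -> float:
    """Jaccard index: URLs cited by both surfaces / URLs cited by either."""
    a = {normalize(u) for u in cited_a}
    b = {normalize(u) for u in cited_b}
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


# Hypothetical citations for one query on each surface.
ai_overview = [
    "https://www.example.com/guide/",
    "https://example.org/a",
    "https://example.net/x",
]
ai_mode = [
    "https://example.com/guide",
    "https://example.edu/b",
    "https://example.io/c",
]

print(f"{url_overlap(ai_overview, ai_mode):.0%}")  # prints 20%
```

Averaging this score over a tracked query set gives a site-independent view of how far the two citation pools diverge. Running it only on the queries a site cares about shows whether the divergence holds in that niche.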

Who Is Affected and What the Implications Are

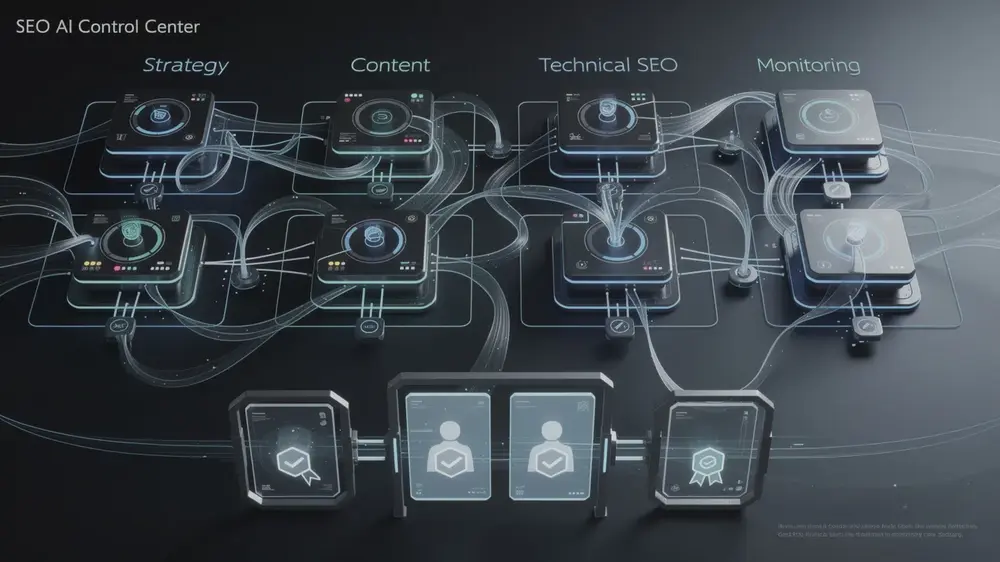

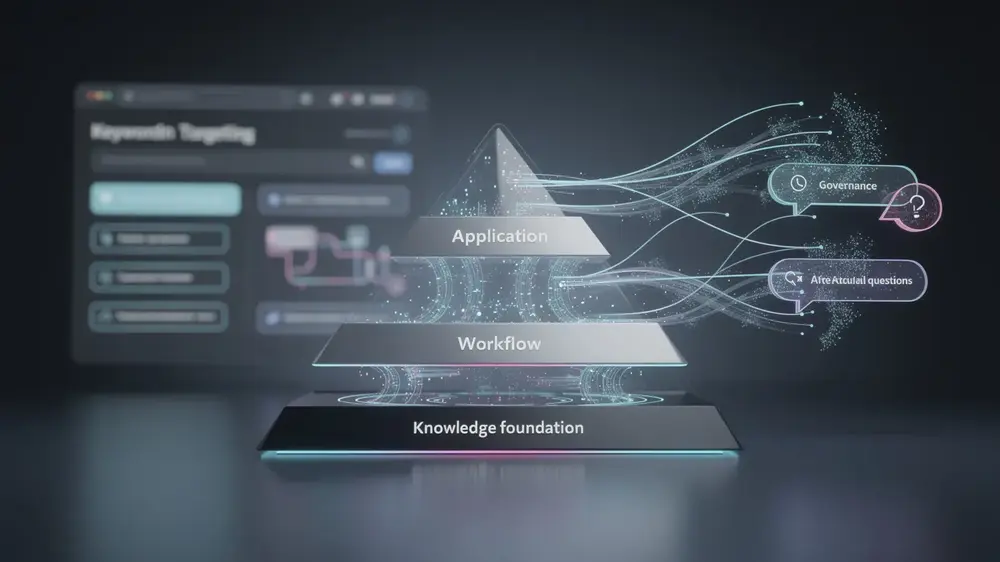

The expansion of AI-driven search features is creating concrete pressure points for several groups, and the effects are already measurable. SEO professionals and site owners are working in a less transparent environment because Google Search Console does not currently separate AI Overview or AI Mode performance from traditional organic search metrics. Optimizing for features whose underlying models are becoming harder to explain is a genuine challenge, and understanding how AI search optimization differs from conventional SEO is increasingly relevant for practitioners trying to adapt.

Publishers and content creators face a related but distinct problem. As AI-generated answers handle more queries directly, zero-click searches are rising, and organic referral traffic is under pressure. Citation patterns are also inconsistent across surfaces: the Ahrefs research found only 13% URL overlap between AI Mode and AI Overviews for identical queries, meaning visibility in one feature does not reliably transfer to the other.

Workforce data adds a broader dimension. Employment among software developers aged 22 to 25 has dropped nearly 20% since 2024, while headcounts for older developers have grown. Similar patterns appear in customer service roles. Unemployment is rising across many occupations, including some with low AI exposure, so the picture is not straightforward. The Tufts AI Jobs Risk Index frames this usefully: roles centered on assembling information from existing sources face more displacement pressure than roles requiring judgment, experience, and original analysis. That distinction matters for anyone thinking about where to position their skills or their content strategy.

From an editorial perspective, the combination of falling model transparency and rising investment is worth treating as a structural tension rather than a temporary gap. When the systems shaping search visibility become harder to audit at the same time that spending on those systems accelerates, site owners and practitioners are left optimizing for surfaces they cannot fully inspect. Grounding strategy in durable signals like authority, structured data, and original expertise is a reasonable hedge against that uncertainty. (Hyogi Park, MOCOBIN)

Practical Response and Next Steps

Tracking AI search performance requires more granularity than most teams currently apply. Because the gains and losses across AI Overviews and AI Mode follow what researchers describe as a jagged frontier, query types returning accurate results today may behave differently with slight variations in phrasing or intent. Tools like Ahrefs allow query-level monitoring, which matters here since Google Search Console does not yet provide separated metrics for AI-driven features versus standard organic results.
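As a rough illustration of query-level tracking, the sketch below compares two snapshots of citation status and lists every query and surface pair whose status flipped. The `Observation` record, the surface labels, and the sample data are hypothetical. No third-party tool's export format is assumed, so real data would need to be mapped into this shape first.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    query: str
    surface: str  # hypothetical labels, e.g. "ai_overviews" or "ai_mode"
    cited: bool   # was the site cited for this query on this surface?


def citation_changes(before, after):
    """Return (query, surface, old, new) for each pair whose status flipped.

    Pairs that appear in only one snapshot are skipped, since there is
    nothing to compare them against.
    """
    old = {(o.query, o.surface): o.cited for o in before}
    changes = []
    for o in after:
        key = (o.query, o.surface)
        if key in old and old[key] != o.cited:
            changes.append((o.query, o.surface, old[key], o.cited))
    return sorted(changes)


# Hypothetical snapshots taken a week apart.
last_week = [
    Observation("best crm for startups", "ai_overviews", True),
    Observation("best crm for startups", "ai_mode", False),
    Observation("crm pricing comparison", "ai_overviews", True),
]
this_week = [
    Observation("best crm for startups", "ai_overviews", False),
    Observation("best crm for startups", "ai_mode", False),
    Observation("crm pricing comparison", "ai_overviews", True),
]

for query, surface, was, now in citation_changes(last_week, this_week):
    print(f"{query!r} on {surface}: cited {was} -> {now}")
```

Keeping the surface as part of the key matters because, per the overlap research, a gain or loss on one surface says little about the other.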

On the content side, strategist Grant Simmons uses the term “golden knowledge” to describe material built on original data, firsthand experience, and genuine depth. This type of content is harder for AI summaries to replicate from training data alone, and it targets the gaps where AI reliability remains uneven. Thin pages and purely AI-generated material offer little defence in this environment, and strong benchmark performance by any model does not guarantee reliable results across all query types.

Authority signals beyond the website itself are also worth addressing. Building a consistent presence across social media and business profiles improves AI citation potential, and auditing structured data helps pages qualify for citations within AI-generated responses. For practitioners looking to ground these tactics in core SEO principles, the fundamentals of relevance, authority, and structured markup remain the most durable starting point.

- Monitor at the query level using third-party tools, not just category-level dashboards

- Prioritise E-E-A-T content with original research and firsthand perspective

- Audit structured data and expand off-site authority signals to improve AI citation eligibility

- Identify specific reliability gaps in AI coverage and create content that fills them
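For the structured data audit, a minimal sketch using only the Python standard library might look like the following. It extracts JSON-LD blocks from a page and flags `Article` objects that lack a few fields. The `REQUIRED` checklist is an illustrative assumption, not a published requirement of any search engine, and the sample page is hypothetical.

```python
import json
from html.parser import HTMLParser

# Illustrative checklist only; adjust to whatever your own audit policy requires.
REQUIRED = ("headline", "author", "datePublished")


class JsonLdExtractor(HTMLParser):
    """Collect the text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def audit(html: str) -> list[str]:
    """Return a list of human-readable problems found in the page's JSON-LD."""
    parser = JsonLdExtractor()
    parser.feed(html)
    if not parser.blocks:
        return ["no JSON-LD found"]
    problems = []
    for i, raw in enumerate(parser.blocks):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            problems.append(f"block {i}: invalid JSON")
            continue
        items = data if isinstance(data, list) else [data]
        for item in items:
            if isinstance(item, dict) and item.get("@type") == "Article":
                for field in REQUIRED:
                    if field not in item:
                        problems.append(f"block {i}: Article missing {field}")
    return problems


# Hypothetical page with an incomplete Article object.
sample_page = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Article",
 "headline": "Example headline", "author": {"@type": "Person", "name": "A. Writer"}}
</script>
</head><body></body></html>
"""

print(audit(sample_page))
```

This only checks that fields are present. It does not validate their values or handle nested `@graph` structures, so it works best as a first-pass filter before a fuller validator.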

Signals To Watch

Several data points in the coming months will clarify whether AI search reliability is genuinely keeping pace with how fast adoption is growing. Each one carries practical weight for site owners and marketers trying to plan around an unusually fluid environment.

Alphabet’s next earnings call is the most immediate checkpoint. The Q1 2025 figure of 1.5 billion monthly users for AI Overviews and the Q3 2025 figure of 75 million daily active users for AI Mode are the last published benchmarks. Updated numbers will show whether growth is accelerating, plateauing, or shifting between products, all of which affect how much traffic is being routed through AI-generated answers rather than traditional blue links.

Google’s March 2026 core update completed roughly one week before the Stanford AI Index report was released. Tracking volatility patterns from that update alongside AI feature expansion will help practitioners understand whether algorithm changes and AI search development are moving in sync or creating friction. Tools that monitor ranking fluctuations, such as Ahrefs, can help surface those patterns at a site level.

The Foundation Model Transparency Index score dropping from 58 to 40 is a separate but related concern. Lower transparency makes it harder to reason about why specific content appears in AI-generated answers. Any new disclosures from major AI firms about training data, parameters, or methods would meaningfully change that picture. Finally, the jagged frontier problem remains unresolved: aggregate benchmark improvements do not predict reliability for the specific query types that matter most to individual businesses.