White hat SEO is the practice of improving search visibility by making a website more useful, accessible, trustworthy, and technically reliable for real users. Black hat SEO takes the opposite path by attempting to manipulate ranking systems through tactics such as cloaking, keyword stuffing, low-quality scaled content, link schemes, and hidden text. The difference is not only ethical. It directly affects how stable a website can be after algorithm updates, spam reviews, and manual quality evaluations.

- White hat SEO builds long-term visibility through useful content, clean technical structure, transparent authorship, and legitimate link earning.

- Black hat SEO relies on shortcuts such as cloaking, link schemes, private blog networks, hidden text, and scaled low-quality content, all of which can lead to ranking loss or manual actions.

- Modern SEO audits should review Core Web Vitals using LCP, INP, and CLS, not the outdated FID metric.

- E-E-A-T is strongest when a page shows real experience, clear expertise, reliable sourcing, accurate updates, and honest limitations.

- AI-assisted SEO tools can support research, structure, and optimization, but final content still needs human review, fact checking, and original insight.

- A sustainable SEO workflow should combine technical audits, search intent analysis, internal linking, content quality review, and safe link acquisition.

What White Hat and Black Hat SEO Mean for Your Website

SEO tactics usually fall into two broad categories: methods that improve a website for users and methods that try to exploit search systems. The first category is white hat SEO. The second is black hat SEO. Both can affect rankings, but only one is designed to protect a site’s long-term value.

How Search Engines Separate Helpful SEO From Manipulative SEO

White hat SEO focuses on making a page easier to crawl, understand, trust, and use. In practice, this means publishing accurate content, matching search intent, using clear headings, improving page speed, adding structured data where appropriate, and earning links because the content deserves to be cited. For a wider foundation, MOCOBIN’s guide to SEO basics explains how search visibility starts with crawlability, relevance, and user value.

Black hat SEO tries to create the appearance of relevance or authority without building the substance behind it. Common examples include keyword stuffing, doorway pages, cloaking, link farms, private blog networks, fake reviews, expired domain abuse, and low-quality scaled content. These tactics may appear to work for a short period, but they create a fragile site profile that can collapse after spam updates, manual reviews, or link quality reassessments.

Why Intent Matters in Modern SEO

The clearest difference between white hat and black hat SEO is intent. White hat SEO asks, “Does this change help users and make the page easier for search engines to understand?” Black hat SEO asks, “Can this change make the algorithm rank the page faster?” That distinction matters because search systems are designed to reduce visibility for pages that exist mainly to manipulate rankings rather than satisfy users.

A practical example is internal linking. A white hat internal link helps users move to a related explanation at the right moment. A manipulative internal link pattern repeats exact-match anchors across many pages only to push ranking signals. The same technical element can be helpful or risky depending on how it is used.

White Hat SEO Checklist: Technical, Content, and Trust Signals

Core Web Vitals and Mobile-First Technical Requirements

White hat SEO starts with technical reliability. Google’s current Core Web Vitals are Largest Contentful Paint (LCP), Interaction to Next Paint (INP), and Cumulative Layout Shift (CLS). LCP measures loading performance, INP measures responsiveness after user interaction, and CLS measures visual stability. INP replaced First Input Delay (FID) as the responsiveness metric in March 2024, so modern technical audits should no longer treat FID as the main interaction signal.
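As a minimal sketch, the three metrics can be rated against Google's published thresholds, which are assessed at the 75th percentile of page loads. The function name `rate_metric` and the sample values are illustrative assumptions, not part of any tool's API.

```python
# Published "good" and "poor" boundaries for each Core Web Vital.
# Values between the two boundaries are rated "needs improvement".
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout shift score
}


def rate_metric(name, value):
    """Rate a 75th-percentile metric value as good, needs improvement, or poor."""
    good, poor = THRESHOLDS[name]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


# Hypothetical field values for one page template.
sample = {"LCP": 2.4, "INP": 350, "CLS": 0.3}
for metric, value in sample.items():
    print(metric, value, rate_metric(metric, value))
```

A rating like this is only a starting point. The more useful question is which template or component causes the slow or unstable result.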

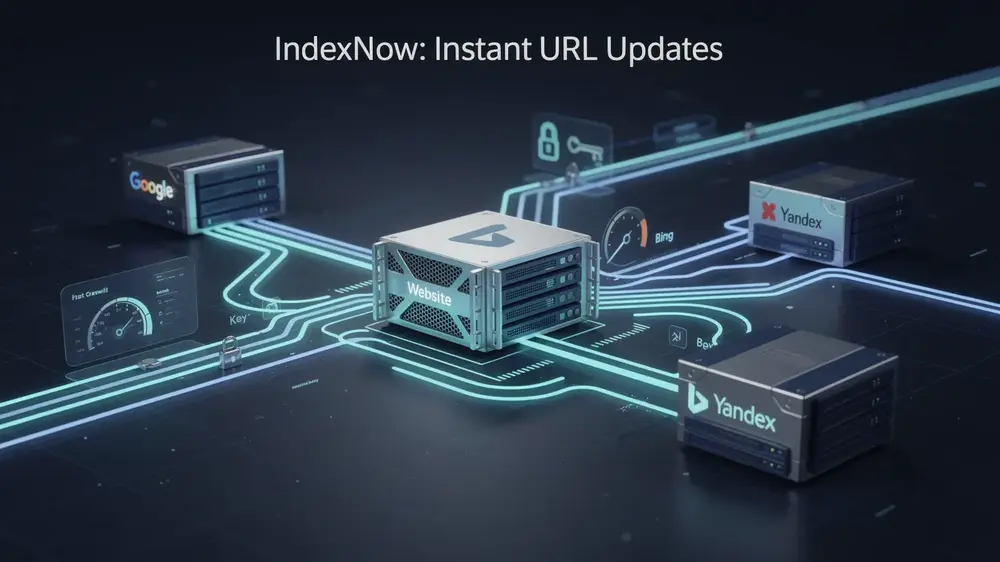

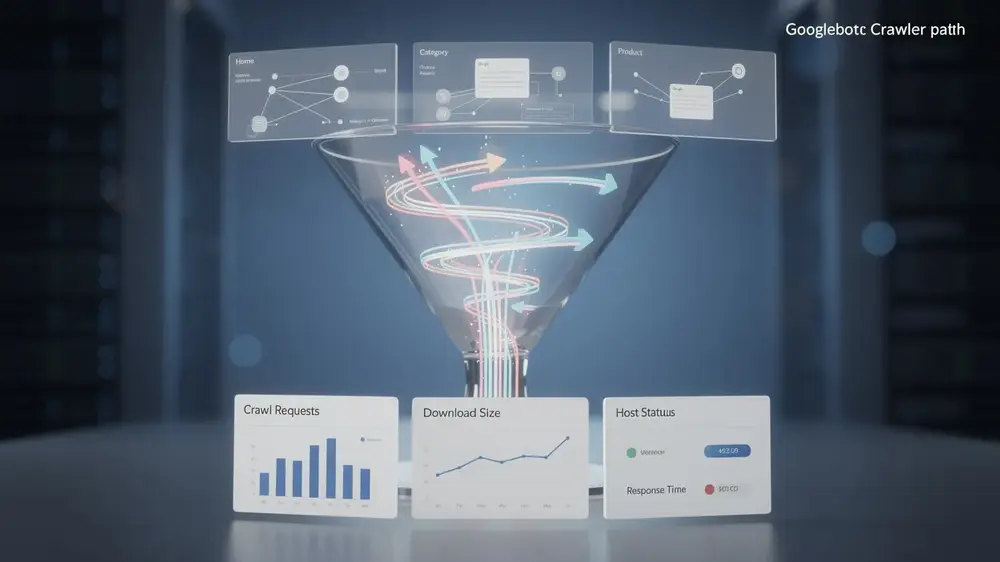

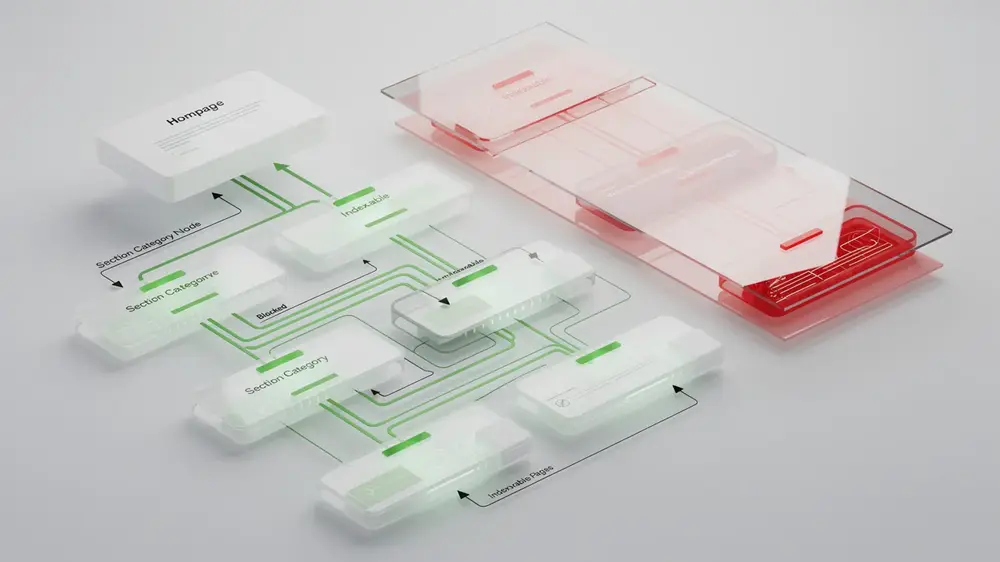

A technical review should also check whether the site is mobile-friendly, crawlable, indexable, and easy for search engines to interpret. This includes XML sitemaps, robots.txt rules, canonical tags, structured data, broken links, redirect chains, image performance, and page templates that create thin or duplicate pages. If the site has performance issues, MOCOBIN’s guide to speed optimization is a useful next step for improving load time and user experience.
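One part of this review, checking robots.txt rules against important URLs, can be sketched with Python's standard `urllib.robotparser`. The rules and URLs below are made-up examples, and `blocked_urls` is a hypothetical helper name. The standard-library parser does simple prefix matching, so results may differ from how a given search engine handles wildcard patterns.

```python
from urllib.robotparser import RobotFileParser

# Example rules, assumed for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /search
"""


def blocked_urls(robots_txt, urls, user_agent="*"):
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


important_pages = [
    "https://example.com/blog/white-hat-seo",
    "https://example.com/search?q=seo",
    "https://example.com/admin/login",
]
print(blocked_urls(ROBOTS_TXT, important_pages))
```

If a page that should rank appears in the blocked list, the rule is worth reviewing before any content work begins.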

On-Page SEO That Supports Users Instead of Forcing Keywords

White hat on-page SEO does not depend on repeating a keyword at a fixed ratio. A better approach is to identify the primary intent of the page, answer it clearly, and use related terms only where they make the explanation more complete. The title tag, meta description, headings, introduction, body copy, image alt text, and internal links should all support the same topic without sounding forced.

For example, a page about white hat SEO can naturally discuss technical SEO, content quality, E-E-A-T, link earning, algorithm updates, and penalties. It does not need to repeat the exact phrase “white hat SEO” in every paragraph. MOCOBIN’s guide to on-page SEO gives a practical framework for aligning page elements with search intent while keeping the writing natural.

E-E-A-T and Content Quality Signals

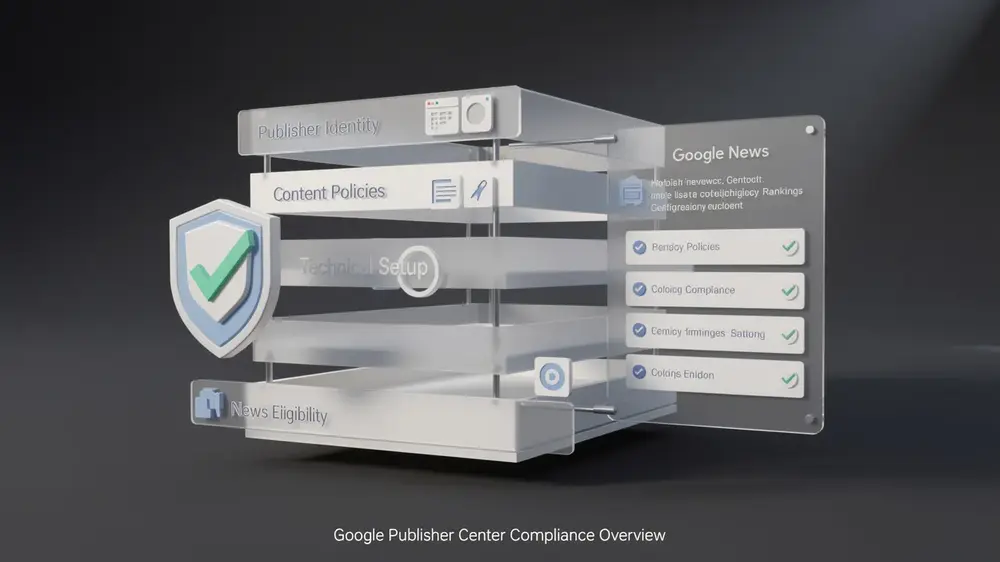

E-E-A-T stands for Experience, Expertise, Authoritativeness, and Trustworthiness. In SEO content, these signals become stronger when the article includes specific examples, accurate definitions, transparent sourcing, author context, and practical guidance that reflects real editorial judgment. A generic article may define the topic correctly, but a stronger article shows how the concept is applied during an actual audit or content review.

For this topic, trust can be improved by explaining what to check in Google Search Console, how to review suspicious backlink patterns, how to update outdated Core Web Vitals references, and how to avoid publishing low-value pages at scale. MOCOBIN’s E-E-A-T guide provides a deeper explanation of how experience and trust signals can be built into SEO pages without exaggeration.

Black Hat SEO Risks: Ranking Loss, Manual Actions, and Recovery Costs

Common Black Hat Tactics That Create Long-Term Risk

Black hat SEO usually begins with the promise of speed. The problem is that fast ranking gains created through manipulation are rarely stable. Common tactics include cloaking, hidden text, doorway pages, spun content, paid link networks, unnatural anchor text patterns, fake author profiles, and pages created mainly to capture search traffic without offering useful information.

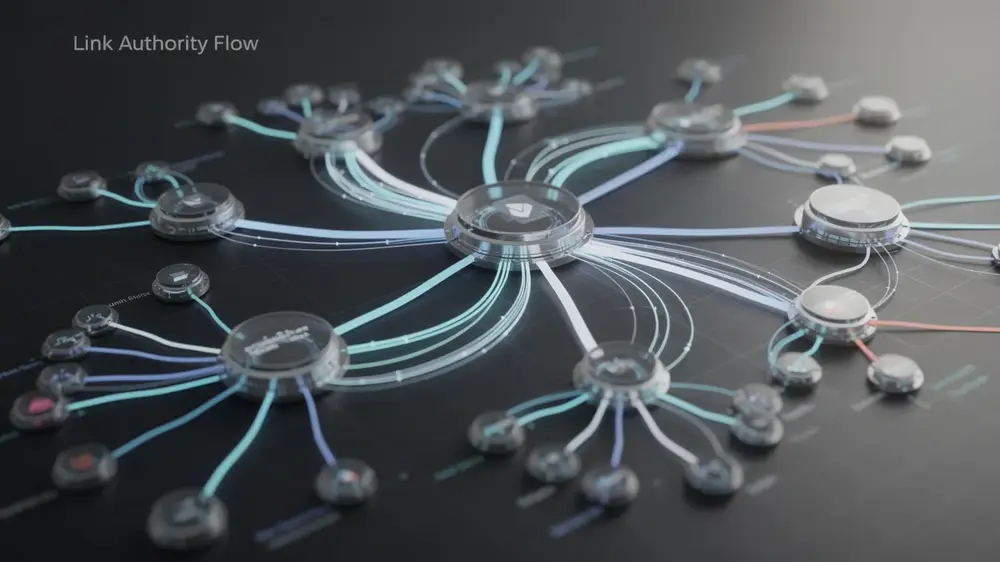

Link manipulation remains one of the most common risk areas. Private blog networks, link farms, automated link placement tools, and low-quality guest post schemes often leave detectable footprints. These may include repeated anchor text, irrelevant linking domains, unnatural publishing patterns, and links from sites with no real editorial standards. A safer approach is to focus on relevance, editorial value, and link quality. MOCOBIN’s guide to link quality explains how to evaluate whether a backlink helps or harms a site’s authority profile.
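The repeated anchor text footprint mentioned above can be checked with a short script. This is a sketch under stated assumptions: the anchor list would come from a backlink export, the helper names are hypothetical, and the 30% cutoff is an arbitrary illustration rather than a known search engine threshold.

```python
from collections import Counter


def anchor_share(anchors):
    """Return each normalized anchor text with its share of all anchors."""
    normalized = [a.strip().lower() for a in anchors if a.strip()]
    total = len(normalized)
    counts = Counter(normalized)
    return {text: count / total for text, count in counts.most_common()}


def over_concentrated(anchors, max_share=0.3):
    """List anchors whose share exceeds max_share (an assumed review cutoff)."""
    return [text for text, share in anchor_share(anchors).items() if share > max_share]


# Hypothetical backlink anchors for one target page.
anchors = ["best seo tool"] * 6 + ["MOCOBIN", "this guide", "https://example.com", "read more"]
print(over_concentrated(anchors))
```

A flagged anchor is a prompt for manual review, not proof of manipulation. Branded anchors, for example, are often concentrated for legitimate reasons.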

Why Automated Spam Is Easier to Detect Over Time

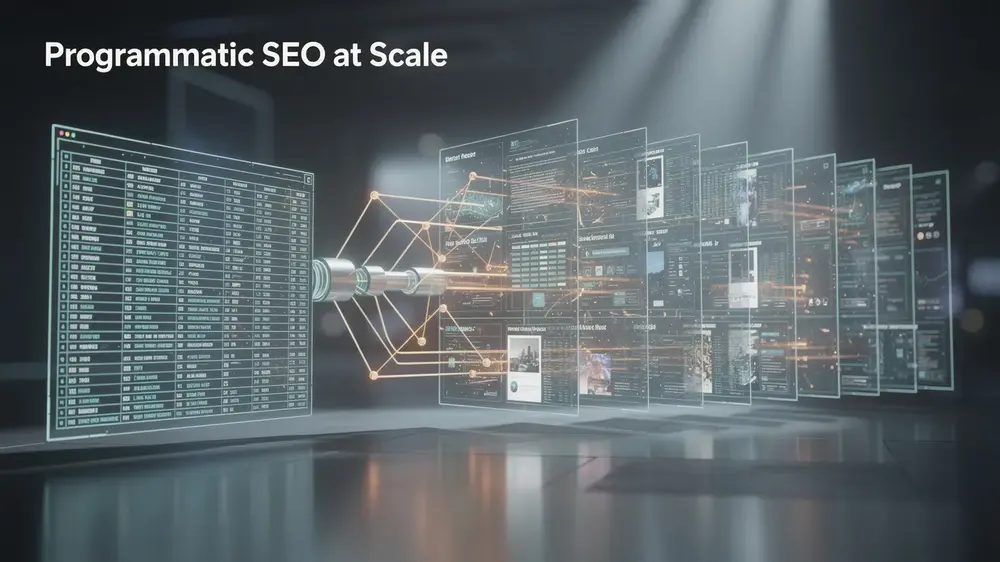

Search engines are increasingly effective at identifying patterns that do not look natural at scale. A single weak page may not be a serious problem, but thousands of thin pages with similar structure, repeated phrasing, weak sourcing, and no original value can create a sitewide quality issue. The same applies to automated link building. When many links use similar anchors, come from irrelevant domains, or appear in unnatural bursts, the pattern becomes easier to detect.
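For a site owner, a rough way to find templated pages with repeated phrasing is to compare their text using word shingles and Jaccard similarity. This is a generic near-duplicate technique chosen for illustration; it is not a description of how any search engine evaluates pages, and the function names and sample sentences are assumptions.

```python
def shingles(text, size=3):
    """Return the set of overlapping word sequences of length `size`."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(text_a, text_b, size=3):
    """Jaccard similarity of two texts' shingle sets, from 0.0 to 1.0."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


# Two hypothetical location pages built from the same template.
page_a = "best plumber in austin call today for a free quote"
page_b = "best plumber in dallas call today for a free quote"
print(round(jaccard(page_a, page_b), 2))
```

On full-length pages, a high score across many URL pairs suggests that the pages differ only by a swapped keyword and may be candidates for consolidation.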

Risk also increases when a site tries to fake trust signals. Synthetic author profiles, copied credentials, AI-generated headshots, fabricated reviews, and invented expert quotes can damage credibility if discovered. White hat SEO does not require a brand to pretend to be more authoritative than it is. It requires the brand to show what it knows, explain how it works, and be honest about limitations.

The Real Cost of Black Hat Penalties

The cost of black hat SEO is not limited to a temporary ranking drop. A site may lose organic visibility, receive a manual action, have pages removed from search results, or spend months cleaning up technical and content issues. In serious cases, recovery requires removing manipulative pages, disavowing harmful links where appropriate, improving sitewide quality, and rebuilding trust through consistent publishing and transparent editorial standards.

The risk of black hat SEO is often misunderstood as a short-term traffic gamble. In reality, the larger cost is trust. Once a domain develops a pattern of manipulation, future content may need to work harder to earn the same level of confidence from users, publishers, and search systems.

How to Use AI-Assisted SEO Tools Without Crossing the Line

Where SEO Tools Can Help

SEO tools can support white hat workflows when they are used for research, diagnosis, and editorial improvement. Keyword tools can help identify search demand. Crawlers can find broken links, duplicate titles, missing canonicals, and indexation issues. Content tools can reveal topic gaps or related entities that deserve coverage. Performance tools can show whether a page is slow, unstable, or difficult to use on mobile.

The key is to treat tools as decision support, not as a replacement for judgment. For example, a content optimization score can suggest missing subtopics, but it cannot confirm whether the article is accurate, useful, or trustworthy. MOCOBIN’s Surfer SEO guide explains how optimization tools can support content planning when their recommendations are reviewed carefully instead of followed mechanically.

How Human Review Protects Content Quality

AI-assisted drafting can save time during research, outline development, and first-pass editing. However, the final article should still be reviewed by a person who understands the topic, audience, and risk level. Human review should check factual accuracy, remove generic phrasing, verify external references, add original examples, and make sure the article reflects the brand’s real experience.

For SEO content, the risk begins when large volumes of unreviewed, repetitive, or weakly sourced pages are published mainly to capture rankings. A safer workflow is to use automation for structure and research support, then add expert review, source validation, internal link selection, and practical examples before publication.

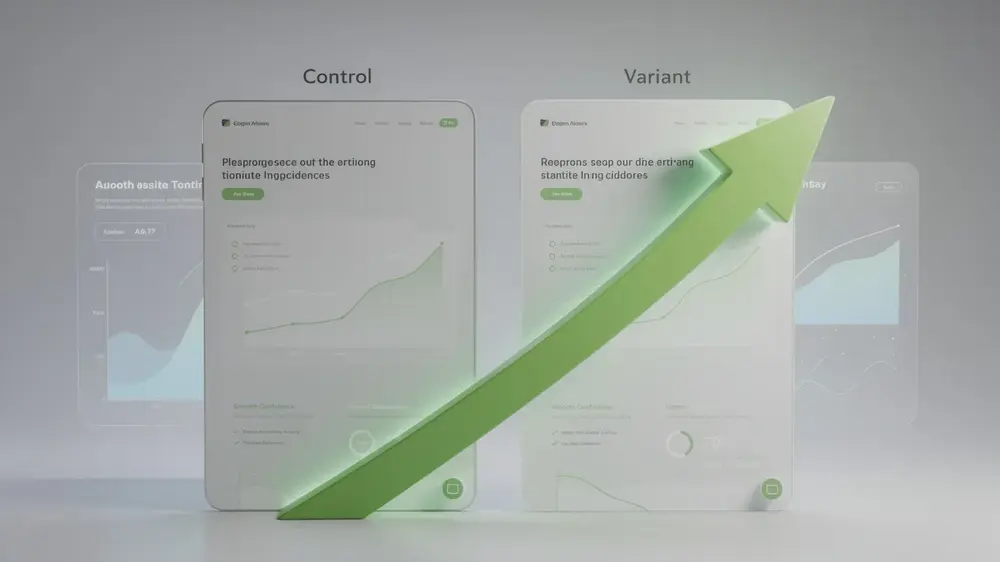

Safe Testing vs Manipulative Testing

Ethical SEO testing measures outcomes without deceiving users or search engines. Safe tests include rewriting title tags, improving meta descriptions, testing clearer heading structures, updating outdated sections, comparing click-through rate changes, and reviewing Search Console data after content improvements. Manipulative testing includes cloaking, hidden content, fake engagement signals, and link schemes designed only to influence rankings.
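Comparing click-through rate before and after a title or description change can be sketched as follows. The input shape, a page path mapped to `(clicks, impressions)`, is an assumption about how exported Search Console data might be arranged, and `compare_ctr` is a hypothetical helper.

```python
def ctr(clicks, impressions):
    """Click-through rate as a fraction; 0.0 when there were no impressions."""
    return clicks / impressions if impressions else 0.0


def compare_ctr(before, after):
    """Return CTR before, after, and the change for pages present in both periods."""
    report = {}
    for page in sorted(before.keys() & after.keys()):
        ctr_before = ctr(*before[page])
        ctr_after = ctr(*after[page])
        report[page] = {
            "before": ctr_before,
            "after": ctr_after,
            "delta": ctr_after - ctr_before,
        }
    return report


# Hypothetical (clicks, impressions) totals for equal-length periods.
before = {"/white-hat-seo": (40, 2000), "/old-page": (5, 300)}
after = {"/white-hat-seo": (75, 2500)}
for page, row in compare_ctr(before, after).items():
    print(page, f"{row['before']:.1%} -> {row['after']:.1%}")
```

A raw difference like this does not account for seasonality, ranking position changes, or shifts in query mix, so it should be read alongside those factors rather than as proof that the change worked.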

The safest question to ask before any test is simple: would this change still make sense if search engines did not exist? If the answer is yes, the test is more likely to support users. If the answer is no, the tactic may be drifting into manipulation.

Implementation Priorities for the First 30 Days

Week 1: Technical and Indexation Review

Begin with the technical foundation. Check whether important pages are indexable, confirm that XML sitemaps are submitted, review robots.txt rules, test mobile usability, and scan for broken internal links. Use PageSpeed Insights to review field data for LCP, INP, and CLS. Lighthouse is useful for lab diagnostics of LCP and CLS, but a standard page-load run has no user interaction to measure, so it does not report INP; Total Blocking Time is the usual lab proxy for responsiveness. If the site has many old URLs, redirects, or duplicate templates, check whether canonical tags are helping search engines understand the preferred version of each page.
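The indexability and canonical checks can be sketched with Python's standard `html.parser`. The class name, helper name, and sample HTML are assumptions for illustration. A real audit would also need to fetch each page and inspect the `X-Robots-Tag` response header, which this sketch does not do.

```python
from html.parser import HTMLParser


class IndexabilityParser(HTMLParser):
    """Collect the canonical URL and any robots noindex directive from HTML."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "link" and (attributes.get("rel") or "").lower() == "canonical":
            self.canonical = attributes.get("href")
        if tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            content = (attributes.get("content") or "").lower()
            if "noindex" in content:
                self.noindex = True


def inspect_page(html):
    """Return (canonical_url, noindex_flag) for one page's HTML source."""
    parser = IndexabilityParser()
    parser.feed(html)
    return parser.canonical, parser.noindex


# Hypothetical page source.
html = """
<html><head>
<link rel="canonical" href="https://example.com/white-hat-seo/">
<meta name="robots" content="noindex, follow">
</head><body></body></html>
"""
print(inspect_page(html))
```

A page that declares a canonical URL and also carries `noindex`, as in this sample, sends mixed signals and is worth checking by hand.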

At this stage, do not focus only on scores. Look for patterns that affect real users: slow templates, unstable layouts, intrusive popups, oversized images, duplicate titles, and pages that return errors. MOCOBIN’s guide to technical SEO can help structure this part of the audit.

Week 2: Content Quality and Search Intent Review

Review the main pages against user intent. Each important page should answer a clear search need, offer enough depth to be useful, and avoid repeating the same generic statements found across competitor sites. Thin pages, outdated statistics, unsupported claims, and duplicated sections should be rewritten or consolidated.

For content that targets competitive informational queries, build the article around practical explanations, examples, limitations, and next steps. MOCOBIN’s guide to SEO content strategy explains how to plan content around topical coverage rather than isolated keyword repetition.

Week 3: Internal Linking and Topical Structure

Internal links should help users move from a broad concept to a more specific explanation. For example, an article about white hat SEO can naturally link to pages about technical SEO, E-E-A-T, link quality, on-page SEO, and content strategy. The goal is not to add as many links as possible. The goal is to create a clear path through related topics.

A strong internal linking structure also helps search engines understand which pages are central to a topic. MOCOBIN’s guide to internal linking explains how to connect related pages without overusing exact-match anchor text.

Week 4: Link Earning and Ongoing Monitoring

After the technical and content foundations are stable, focus on earning links through useful resources, expert commentary, original data, digital PR, and relevant guest contributions. Avoid shortcuts that create link volume without editorial value. A small number of relevant, trusted links is usually more useful than a large number of weak links from unrelated domains.

Monitor progress through Google Search Console, analytics data, crawl reports, and ranking changes over time. Look for improvements in impressions, click-through rate, indexed pages, engagement quality, and the number of pages that earn natural links. If visibility drops, review recent content changes, technical errors, indexing issues, algorithm updates, and backlink quality before assuming a single cause.

White Hat SEO as a Long-Term Growth System

White hat SEO is slower than shortcuts, but it creates a more durable foundation. A site that publishes useful content, maintains clean technical structure, earns relevant links, and updates outdated pages is better prepared for ranking volatility. It also builds trust with users, which is increasingly important as search results include more AI summaries, rich results, and zero-click experiences.

The most reliable SEO teams treat optimization as a continuous process. They audit, publish, measure, update, and improve. They do not rely on one tactic, one tool, or one ranking loophole. Over time, that discipline creates a site that is easier to trust, easier to crawl, and easier to recommend.

For businesses that want sustainable organic growth, the practical choice is clear. Build pages that deserve to rank, keep technical quality high, document editorial standards, and avoid tactics that would be difficult to explain publicly. That is the real value of white hat SEO: not only better rankings, but a stronger website.